Last month in AI governance

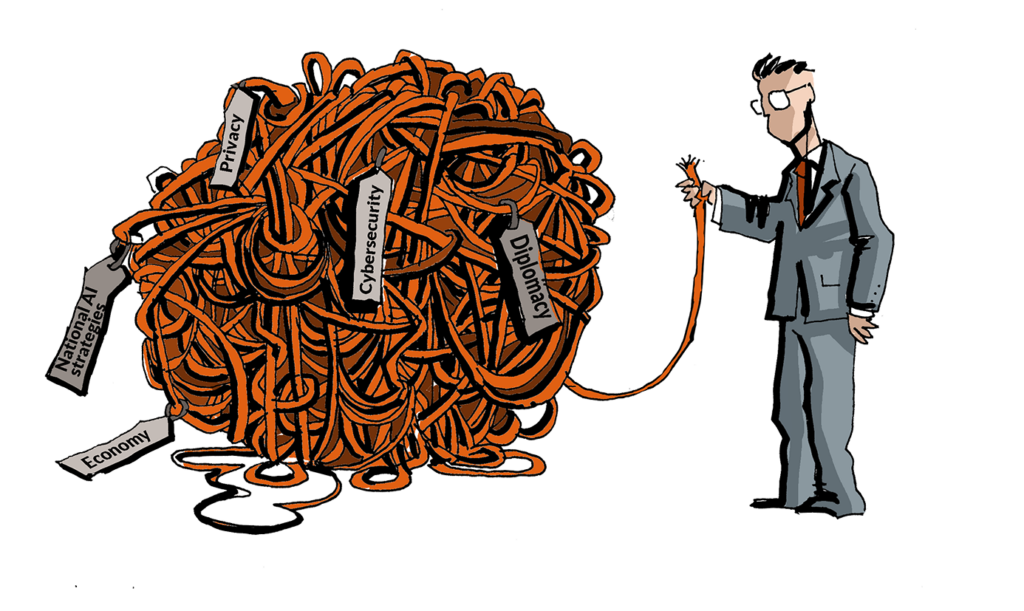

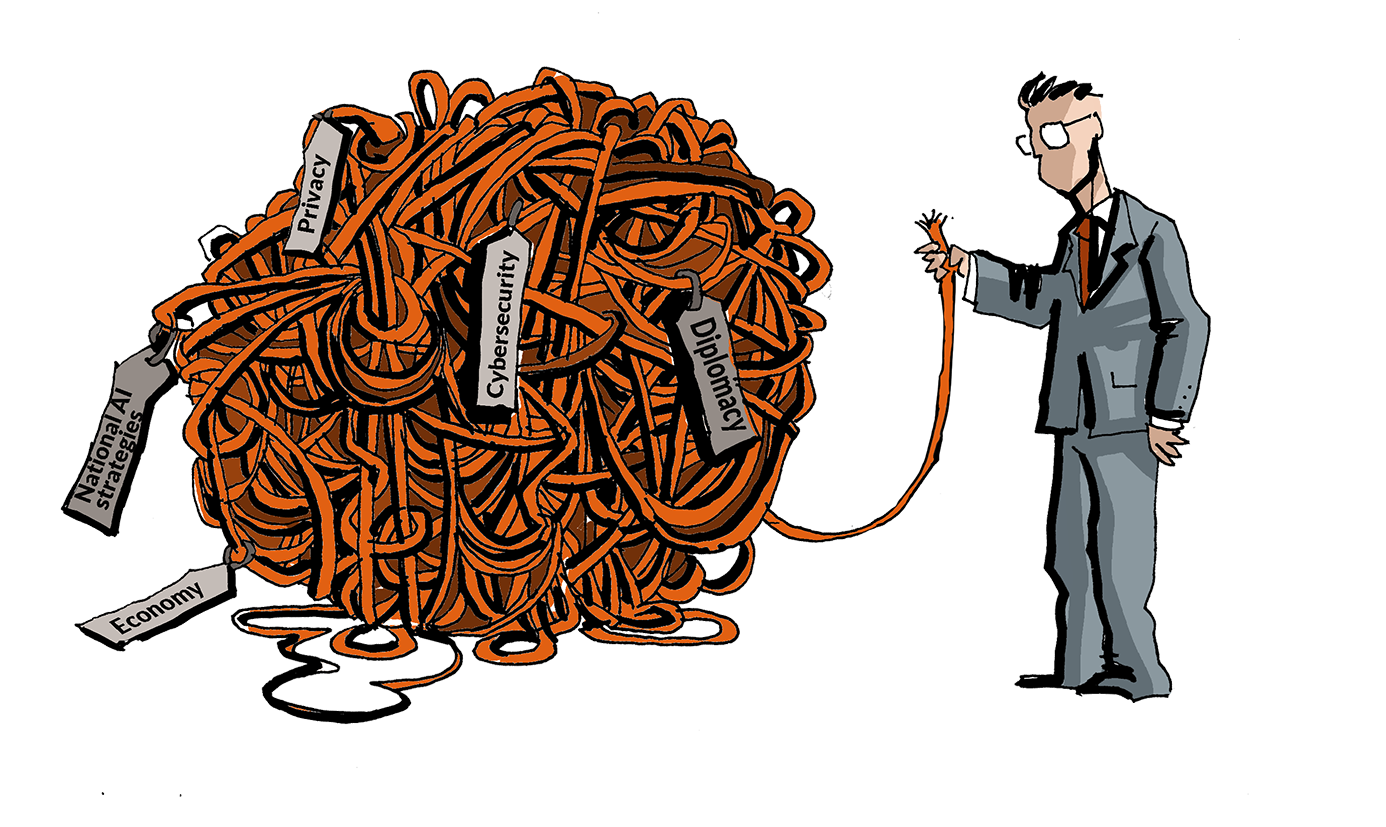

Global AI governance

The Vatican has established an Interdicasterial Commission on Artificial Intelligence, approved by Pope Leo XIV, to coordinate work on the implications of rapidly evolving AI technologies.

At the UN, preparations are accelerating for the first Global Dialogue on AI Governance, to be held in Geneva on 6 and 7 July 2026. The organisation has invited member states and stakeholders to nominate co-chairs for the thematic discussions and to take part in the Dialogue. The discussions will be organised around four themes, each co-chaired by a member state and a stakeholder representative, with the aim of fostering multistakeholder exchanges on experiences, best practices and policy cooperation. Governments are invited to nominate high-level representatives, while stakeholders are encouraged to nominate senior experts with competence in the selected theme.

In addition, the UN Office for Digital and Emerging Technologies has launched the AI Governance Lab for Humanity in Valencia to strengthen international cooperation on AI governance. The Lab will work to improve interoperability between national and regional governance frameworks and to support practical implementation across regions and sectors. Its work will include network mobilisation, comparative policy analysis and the development of cooperation tools for AI governance.

Partnerships

Australia and Japan strengthened their cooperation on economic security and critical technologies.

The EU and Japan advanced their joint work on AI governance and cross-border data flows. India and France discussed closer cooperation in space, AI, applied mathematics and advanced technologies. Canada and Spain signed a memorandum of understanding to consolidate their collaboration on AI development and adoption, as well as digital innovation. South Korea and the Netherlands discussed cooperation on semiconductors and AI. South Korea and the United Arab Emirates expanded their cooperation on AI infrastructure and semiconductors through a new bilateral investment forum focused on AI ecosystems, data centres and advanced technologies. The United States and Sweden signed a new memorandum of understanding focused on cooperation in strategic technologies, research and industrial innovation. Russia and China agreed to coordinate their positions on AI-related scientific and policy issues within international organisations. Even geopolitical rivals appear to be cautiously reopening dialogue on AI governance. The United States and China are considering launching formal talks on AI, an acknowledgement that some degree of coordination may become unavoidable as frontier systems grow in capability and global importance.

National AI strategies

In May, governments continued to refine their national AI agendas.

The White House issued an executive order aimed at strengthening US cybersecurity through closer cooperation with the AI sector, while maintaining a restrained approach to regulating that sector in order to preserve American technological leadership. The order directs federal agencies to accelerate the protection of government networks, expand access to AI-based cybersecurity tools and improve support for operators of critical infrastructure, including hospitals, banks and utilities. It also establishes a voluntary AI cybersecurity clearinghouse to coordinate vulnerability detection and remediation efforts across government and industry. In addition, federal authorities will develop a classified process to evaluate advanced AI systems and identify "covered frontier models" with significant cyber capabilities. Developers will be encouraged, but not required, to give the government early access to these models before wider release.

Papua New Guinea set out a national approach to AI centred on data sovereignty, trusted public infrastructure and new legislation. The approach rests on four framework elements: strengthening existing digital foundations such as SevisPass and SevisDEx, creating a national AI registry, adopting sovereign data governance, and introducing new laws, including a national artificial intelligence act and a data governance and protection act.

In South Africa, the Department of Communications and Digital Technologies set up a seven-member independent expert panel to review a draft national AI policy, after the previous version was withdrawn because it contained fictitious, possibly AI-generated references. The new panel has been tasked with revising or removing problematic content and correcting citations. The revised policy is expected to be approved by Cabinet by November 2026 and to undergo a public consultation planned for January 2027.

Singapore, meanwhile, updated its National AI Strategy (NAIS), setting out 10 updated priorities covering governance, industry adoption, research, talent, compute, data and international cooperation. The strategy emphasises sectoral transformation through national AI missions, deeper integration of AI across the public sector, expanded research across the full AI stack, and the development of AI-literate talent alongside specialists. It also stresses resource-efficient computing, stronger data governance, and positioning Singapore as a trusted hub for international AI collaboration.

Cybersecurity

Authorities in the United Kingdom, Singapore, Australia and Switzerland warned that AI could accelerate the discovery and exploitation of software vulnerabilities, enable new forms of adversarial machine learning attacks, and increase the scale and sophistication of cyber operations. Financial regulators in the United Kingdom and Australia likewise urged organisations to strengthen governance, vulnerability management, third-party oversight and recovery capabilities in anticipation of malicious actors equipped with more capable AI.

Governments are responding with new institutions, regulatory frameworks and defensive capabilities.

The United Arab Emirates launched a national AI security laboratory focused on certification and cyber resilience, while Australia established an AI safety institute tasked with evaluating emerging AI systems and supporting regulators. The United Kingdom announced plans to develop a national AI-powered cyber defence capability based on autonomous software agents able to identify and respond to threats at machine speed. Australia and the United Kingdom also strengthened cooperation between their respective AI safety and security institutes, with a particular focus on frontier AI risks and cybersecurity.

At the international level, efforts increasingly focus on common standards and coordination. The G7 Cybersecurity Working Group published guidelines on a software bill of materials for AI to improve transparency and cybersecurity across AI supply chains. G7 digital and technology ministers committed to advancing cooperation on AI evaluation frameworks, public procurement guidelines and the detection of AI-generated content.[1] They also called for clearer principles on AI openness, greater support for digital literacy and AI skills development, and targeted measures to help SMEs adopt AI.

The intersection of AI and national security is also becoming more visible. South Korea held an interministerial review of AI-related cyber threats, bringing together science, defence and intelligence agencies to coordinate responses. In the United States, a federal appeals court heard arguments from Anthropic challenging its designation as a supply chain risk to national security, highlighting emerging questions about how governments assess and regulate AI companies on national security grounds.

Meanwhile, AI capabilities are increasingly extending beyond the digital domain. North Korea announced tests of AI-guided cruise missiles and precision artillery systems, marking a new stage in the country's efforts to modernise its conventional armed forces. State media reported that the systems incorporate AI-assisted targeting and autonomous navigation technologies designed for contemporary warfare.

Human rights

Privacy authorities are scrutinising ever more closely how generative AI systems collect, process and disclose personal data, with enforcement actions and guidance multiplying across jurisdictions.

Canada's federal and provincial privacy authorities found that certain aspects of OpenAI's collection, use and disclosure of personal information through ChatGPT did not comply with applicable private-sector privacy laws. They concluded that the company's initial collection of personal data from publicly accessible websites and licensed third-party sources for model training was overbroad and therefore inappropriate, given the scale, sensitivity and potential inaccuracy of the data involved, as well as the limits of the safeguards in place at the time.

The United Kingdom brought into force regulations requiring the Information Commissioner to prepare a code of practice on the processing of personal data in relation to AI and automated decision-making. The code must also include guidance on good practice in processing children's personal data.

France's data protection authority, the CNIL, and South Korea's Personal Information Protection Commission jointly produced a poster to raise public awareness of privacy risks in generative AI. The poster, titled "IA générative et vie privée" ("Generative AI and privacy"), offers practical advice on how users can protect their personal data before, during and after using generative AI services.

Legal

In Europe, policymakers continued to refine the implementation architecture of the AI Act. The European Commission published draft guidelines on high-risk AI systems, intended to help providers and deployers determine whether AI systems fall into the Act's "high-risk" category. EU institutions also reached a provisional agreement on the latest omnibus package, which extends implementation deadlines, simplifies certain obligations and strengthens the powers of the AI Office. The Commission also appointed new expert bodies, the scientific panel and the advisory forum, to provide independent expertise to the Commission's AI Office and to the national authorities overseeing compliance with the regulation.

Spain approved a draft organic law to integrate the EU AI Act into its national legal framework. The proposal designates the national AI supervisory agency as the central authority, while assigning sectoral oversight responsibilities to financial, data protection and judicial regulators.

AI and intellectual property issues also drew international attention. At EU level, the European Commission launched a consultation on whether copyright rules should be updated to reflect the evolving digital economy, including the challenges posed by generative AI. The review will assess the effectiveness of the 2019 Copyright Directive and examine whether existing rules remain fit for purpose as AI systems increasingly rely on copyright-protected content. South Korea published an English-language guide on fair use of copyrighted content for training generative AI models and signalled its intention to play a more active role in international discussions on applying copyright exceptions to AI development. Meanwhile, the Intellectual Property Office of Singapore and the Japan Patent Office launched a cooperation initiative on the use of AI in patent examination, comprising technical exchanges and examiner training aimed at improving the quality and consistency of patent examination processes.

Alongside regulatory reforms, governments are also developing frameworks for deploying AI in the public sector. New Zealand published guidelines encouraging public regulators to adopt AI for low-risk administrative tasks while maintaining human oversight and accountability. The guidelines highlight low-risk uses such as case triage, prioritisation and validation of structured data.

Economy

Institutions and companies are increasingly grappling with how AI will reshape labour markets, skills systems and platform ecosystems, as emerging divisions across policy and industry become more visible.

On platform regulation, Meta decided to challenge an EU decision that would require WhatsApp to allow interoperability with rival AI chatbots. The case reflects growing regulatory pressure in Europe to open dominant messaging platforms to third-party AI services.

Public statements by senior figures at Anthropic and OpenAI highlighted a growing divergence within the AI sector over the speed and scale of workforce transformation. Speaking at a Vatican conference on AI ethics, Anthropic co-founder Chris Olah warned that large-scale displacement of white-collar workers remains a plausible scenario as frontier systems advance. OpenAI CEO Sam Altman took a more cautious position, saying that AI has so far had a more limited impact than expected on entry-level white-collar employment, which suggests that earlier predictions of rapid disruption may have overestimated the pace of change.

At the same time, South Korea set up a tripartite committee on AI and labour, bringing together government, employers and workers to assess the employment implications of AI adoption and coordinate policy responses. The initiative is part of a broader effort to anticipate labour market disruption and structure dialogue among the main economic actors. The International Labour Organization also warned that lifelong learning will be essential to the future AI economy, stressing the need for continuous skills development as automation and AI reshape occupational structures.

Sociocultural

International organisations and regulators are stepping up efforts to embed ethical and socially beneficial applications of AI in cultural, educational and governance frameworks.

The UNESCO Regional Office for East Asia launched a global call for good-practice examples of how AI and design are being used to support culture, creativity, education, sustainability and social inclusion. The call invites submissions from organisations, institutions, practitioners, educators and innovators who use AI together with design approaches to create positive outcomes in the cultural and creative sectors.

UNESCO announced the launch of its AI Readiness Assessment Methodology in Kazakhstan to assess the country's readiness for AI governance and development. The framework aims to help countries align their AI governance approaches with the UNESCO Recommendation on the Ethics of AI.

A national pilot programme for AI ethics review and services was launched in China, as authorities seek to strengthen oversight of the growing risks posed by advanced AI systems. The initiative will initially be rolled out in selected provincial innovation zones and will focus on developing ethics review mechanisms, setting up specialised committees and creating dedicated ethics service centres. Regulators also plan to build ethics review processes into technical standards and to strengthen mechanisms for reporting AI-related ethical issues.

Content governance

Governments and institutions are increasingly adopting targeted rules and guidelines to govern the use of generative AI in media, research, education and public services.

Vietnam introduced new disclosure obligations for AI-generated and AI-modified content, requiring clear labels on audio, images and video that imitate real people or convincingly recreate real-world events, as part of broader efforts to improve transparency and address the risks of increasingly realistic synthetic media.

The European Commission updated its "ERA Living Guidelines" on the responsible use of generative AI in research, introducing new recommendations on confidentiality, intellectual property and data protection risks when using external AI tools, and highlighting emerging threats such as hidden prompts designed to manipulate AI systems. The Commission also revised its guidelines on the ethical use of AI and data in education to help teachers and school leaders use the technology safely and responsibly, in line with EU values.

Separately, Swiss media organisations adopted a national code of conduct for the use of AI in journalism, reinforcing accountability, transparency and copyright protection.

Health New Zealand published guidelines urging caution in the use of generative AI and large language models in healthcare settings, restricting their use for clinical decisions and for processing sensitive data.

Capacity building

The Council of the European Union approved conclusions calling for an ethical, safe and human-centred approach to AI in education, stressing that teachers must remain at the heart of the learning process as AI tools are increasingly used in schools and universities. The conclusions invite national governments to strengthen teachers' AI and digital skills through training, while encouraging the development and use of education-specific AI tools that offer proven pedagogical value and meet requirements on data protection, accountability and risk awareness.

Meanwhile, China launched a global AI education services platform to widen cross-border access to digital learning resources and support the integration of AI into education. The platform is now accessible in around 220 countries and regions.

Several countries are also focusing on national AI literacy and public adoption. Malta launched "AI for All", a national AI awareness programme designed to help citizens and residents understand the capabilities, limits and responsible use of AI in daily life and at work, alongside a partnership offering access to advanced AI tools. Greece published "Artificial Intelligence for All", a public guide to AI and large language models intended to improve basic understanding of how these systems work and how they are used in practice. Australia's National AI Centre launched AI.gov.au, a central platform to support safe and responsible AI adoption by businesses and not-for-profit organisations.

Governments increasingly treat AI as a structural tool for modernising the state. For example, Argentina's Ministry of Human Capital launched the "Digital Twin" initiative, an AI-based system intended to simulate the potential impacts of social policies before they are implemented. The system is designed to model scenarios in areas such as poverty, subsidies and human capital development using large-scale datasets. According to officials, the project is part of broader efforts to use data analytics and predictive tools in public policy planning. New Zealand presented plans for a major public sector reform that places AI at the heart of modernising administration and service delivery. Kazakhstan reviewed proposals to extend AI deployment across all sectors as part of its digital transformation agenda. It plans to increase national computing capacity, improve resource allocation systems to avoid infrastructure bottlenecks, and strengthen data infrastructure through initiatives such as the "Data Center Valley" in Pavlodar, alongside updated cloud governance regulation.

Countries are also investing in building structural capacity. Canada is strengthening its national photonics and semiconductor capabilities through the commercialisation of the National Research Council's Canadian Photonics Fabrication Centre. In Europe, the University of Belgrade in Serbia announced the launch of SAIFA, an AI infrastructure initiative supported by the EuroHPC Joint Undertaking, aimed at widening access to high-performance computing for research, industry and public administration.

Magnifica Humanitas: human dignity in the age of AI

Pope Leo XIV has published Magnifica Humanitas, a groundbreaking encyclical setting out the Catholic Church's position on artificial intelligence.

To grasp its full significance, it helps to recall that an encyclical is a pastoral letter written by the pope to the whole Roman Catholic Church on matters of doctrine, morals or discipline. It also speaks beyond the Church, often influencing global ethical and political debates on major issues such as inequality, war, the environment and technology.

When critics objected to Pope Leo XIII publishing Rerum Novarum, on society, the economy and politics, the pope remarked that proclaiming the Gospel could not ignore people's concrete lives. The same logic underpins Magnifica Humanitas.

Rooted in millennia of history, Magnifica Humanitas offers an important antidote to the narrative that presents AI as inevitable: the race cannot be stopped, the market cannot be slowed, geopolitical competition cannot be avoided, and humans must simply keep up. The encyclical rejects this fatalism. It insists that the technological future must be shaped by our choices.

The encyclical frames AI governance through two contrasting metaphors: the Tower of Babel and the walls of the New Jerusalem. Babel embodies technological ambition without humility, rising towards the heavens through ambition, control and self-exaltation, only to end in fragmentation, confusion and collapse. The walls of Jerusalem offer a different vision. They are built piece by piece, through cooperation, solidarity, dialogue and responsibility. They call for diplomacy rather than domination, patience rather than acceleration, and the common good rather than the race for supremacy.

Ultimately, Magnifica Humanitas comes down to a choice between these two metaphors, Babel and Jerusalem. The encyclical warns us against sleepwalking into Babel and offers guideposts for reaching Jerusalem by drawing on the cognitive, emotional and spiritual strengths that make us human.

How to repair humanity's "operating system". The encyclical presents humanity's "operating system" as rooted in the traditions of the Axial Age, which defined humanity's moral, rational and spiritual identity and were later refined by Enlightenment thought. It warns that transhumanism and posthumanism, driven by AI and bioengineering, risk redefining humans as entities to be optimised rather than as beings with intrinsic dignity. Magnifica Humanitas concentrates on this central dilemma, setting aside the trappings of the technological, commercial and other narratives that dominate our communication space. It also calls for revisiting the core of humanity's "operating system", centred on human life and dignity, arguing that humans should not be "optimised" in the way technologies are.

The encyclical warns against the danger of treating AI systems as neutral and objective, stressing that technology takes on the characteristics of those who design, fund, regulate and use it.

Clarity on AI governance and accountability. The encyclical brings clarity to AI governance by challenging the technocratic paradigm, in which governance is treated as an exercise in expertise focused mainly on standards, risk management and efficiency. It argues instead that AI governance must start from human dignity and the common good. Decisions about AI should be made by the people and communities directly affected. Communities should not merely be consulted after rules and platforms have been set elsewhere. They must be able to influence, question and correct the systems that affect their lives. This strongly echoes the multistakeholder tradition of internet governance and challenges both corporate monopoly and state-centred control.

A fresh look at human rights. The encyclical highlights a paradox of our time: human rights are widely proclaimed in formal terms, yet rapidly eroded by, among other things, technological progress. Through profiling, manipulation and pervasive technological optimisation, people are increasingly treated as data points and as components of algorithmic models. One of the encyclical's most essential contributions is therefore its renewed grounding of human rights as a means of protecting our fundamental humanity in the age of AI.

Disarming AI. A striking concept in the encyclical is the call to "disarm AI". This is not limited to regulating autonomous weapons or military systems, urgent though those issues are. It is a broader call for cognitive, economic and political disarmament. AI is becoming an instrument of geopolitical rivalry, commercial domination, surveillance, propaganda and social control. The encyclical challenges the idea that the AI race is inevitable. It calls for AI to be developed as a tool in the service of humanity rather than as a weapon of power and war.

Slavery and colonialism in the age of AI. Digital colonialism is another concern of the encyclical. Colonial powers no longer need to control territory. They can appropriate data, influence markets, control infrastructure and extract value from human lives turned into exploitable information. A truly postcolonial digital order would restore autonomy to individuals and communities. It would give people not only access to their data but also meaningful control over how that data is used, by whom and for whose benefit.

How to address AI risks. Magnifica Humanitas offers an informed and incisive analysis of AI risks. On existential risk, it departs from narratives centred on a takeover by artificial general intelligence (AGI) or superintelligence, arguing instead that existential risks may emerge more gradually, and not necessarily from the technology itself but from those who own and operate AI systems. On exclusion risk, it warns against the concentration of power in the hands of a few and the exclusion of many others, with monopolies taking epistemic, economic and political forms. It highlights how opacity and the absence of public oversight can produce dependency, exclusion, manipulation and inequality, notably through control over visibility, amplification and behavioural influence. It also examines existing risks relating to employment, the economy, disinformation and education.

Langue et culture à l’ère de l’IA. Le Pape met en garde contre le fait que la violence commence souvent par le langage : les guerres sont précédées par des guerres de mots. À cet égard, l’IA joue un rôle crucial dans l’influence de la culture en tant que génératrice de textes, de vidéos et d’autres artefacts de communication. L’encyclique appelle donc à accorder une attention renouvelée à la vérité, au dialogue et à l’écologie morale du langage. L’IA présente les risques principaux de générer des récits synthétiques, une persuasion superficielle et une distorsion automatisée. Le défi ne réside pas seulement dans la désinformation. Il s’agit de l’érosion progressive de la réalité partagée.

Education in the age of instantaneity. Magnifica Humanitas gives great weight to education in the age of AI, in keeping with the Catholic Church's long doctrinal tradition. The encyclical highlights a fundamental tension between rapid access to information in the digital age and the inherently slow nature of learning. Information can be transmitted instantly. Knowledge, and wisdom even more so, take a great deal of time. Yet schools and universities are not prepared to manage this tension between AI technology and human learning.

AI's impact on nature and the environment. The encyclical also reminds us that nothing in the digital world is immaterial or magical. AI depends on physical infrastructure: data centres, energy grids, minerals, water, devices, cables and supply chains. Today's AI systems require enormous amounts of energy and water and put growing pressure on natural resources. The environmental cost of AI is therefore not external to the debate. A technology that claims to advance humanity cannot be assessed solely on its speed, accuracy or profitability. It must also be judged by its impact on the planet, on vulnerable communities and on future generations.

Why Magnifica Humanitas matters. The historical significance of Magnifica Humanitas lies in its attempt to move the AI debate from technical management to civilisational reflection. It challenges the concentration of power in the hands of a few companies. It questions transhumanist and posthumanist narratives that treat the human person as obsolete or in need of upgrading. It insists that AI governance must be grounded in dignity, subsidiarity, solidarity, justice and the common good. It offers new perspectives on the risks of digital slavery, colonialism, militarisation, manipulation and anthropological regression.

Most importantly, it restores moral agency. Metaphorically speaking, we are not condemned to build a Tower of Babel. We can choose to build the walls of the new Jerusalem.

This text is adapted from Dr Jovan Kurbalija's analysis of Magnifica Humanitas, which will be expanded in the coming days.

Beyond AI: the developments that made headlines in May

Technology

Norway has joined the Pax Silica initiative, which focuses on the security of supply chains for AI, semiconductors and critical raw materials. Norwegian officials said participation could improve market access for domestic companies operating in advanced technology sectors and strengthen economic security cooperation with strategic partners.

The European Commission has adopted a proposal on the future allocation of the 2 GHz frequency band for EU mobile satellite services beyond 2027. This spectrum is increasingly seen as a strategic asset for both commercial connectivity and defence-related communications, particularly for direct-to-device (D2D) satellite services and critical communications infrastructure. Under the proposal, one third of the spectrum would be reserved for governmental, security and military use under the EU's IRIS² secure connectivity programme. The remaining two thirds would go to commercial services, with half of that capacity (one third of the total band) reserved specifically for EU operators entering the market and the rest remaining open to both EU and non-EU companies.

Cybersecurity

The EU has agreed on common templates for reporting cybersecurity incidents across the Union, aiming to reduce administrative burdens and simplify compliance for companies required to report incidents under the NIS2 Directive. The Commission now plans to formalise these templates through an implementing act, which would make them mandatory in all member states.

The Council of the European Union has extended until 18 May 2027 the restrictive measures against individuals and entities involved in cyberattacks threatening the EU and its member states. The framework allows the EU to impose targeted sanctions on persons or entities involved in significant cyberattacks that constitute an external threat to the Union or its member states. Measures can also be imposed in response to cyberattacks against third countries or international organisations where they support the objectives of the Common Foreign and Security Policy.

Germany's federal government has approved a bill to strengthen the cyber defence capabilities of three federal agencies: the Federal Office for Information Security (BSI), the Federal Criminal Police Office (BKA) and the Federal Police (Bundespolizei). The authorities would be able to block or disrupt software and server infrastructure used in cyberattacks, including systems located outside Germany. The BSI would also receive expanded powers to collect, store and analyse data in order to detect activity indicating that an attack is being prepared.

YouTube, Snap, TikTok and Meta have agreed to settle a landmark lawsuit brought by the Breathitt County School District in Kentucky, which accused them of designing algorithmic systems and features, such as infinite scroll and engagement-driven recommendation loops, that foster compulsive use among young users and impose additional financial and educational burdens on schools. The terms of the settlement were not disclosed. The trial had been scheduled to open on 12 June and will now not go ahead.

Meanwhile, the UK's Ofcom reported that almost all 8-to-17-year-olds in the United Kingdom (99%) are online, a finding that reinforces its push to strengthen platform accountability. Ofcom wants child safety built into product design from the outset, and wants regulators to be told about new features in advance so that potential risks can be assessed. However, the regulator remains concerned that some platforms are not doing enough to make feeds safer, and doubts that policies setting a minimum age of 13 effectively prevent underage access.

The Office of the Privacy Commissioner of Canada (OPC) has published guidance on how organisations should assess and implement age assurance tools for websites and online services. The OPC states that age assurance should be used only where there is a clear legal obligation or a demonstrated risk of harm to children. It stresses that, before adopting such systems, organisations must consider whether less intrusive alternatives could address those risks.

The Malaysian Communications and Multimedia Commission (MCMC) has published the Child Protection Code and the Risk Mitigation Code under the Online Safety Act 2025. The new rules require stronger safeguards for young users on online platforms, including more rigorous age checks and tighter content governance obligations for service providers. Malaysia has also begun barring children under 16 from creating social media accounts. Major platforms such as Facebook, Instagram, TikTok and YouTube are required to verify users' ages against government records, with penalties of up to 10 million ringgit (about US$2.5 million) for non-compliance. Existing users will undergo age verification checks during a six-month transition period.

Sweden is also considering barring young users from social media. In an interim report submitted to the Minister for Social Affairs, government investigators appointed last year recommended banning under-15s from platforms that allow users to discover, contact and communicate with one another, or on which content can be shared or discovered.

G7 digital and technology ministers have agreed on a set of common principles defining a safer and more secure digital space for minors. The ministers emphasise effective age verification and safety features built into platforms by design, including default protections, parental controls and recommender systems that limit exposure to harmful or addictive content. They also call for rigorous prevention and enforcement against serious harms such as child sexual abuse material and non-consensual intimate images, including AI-generated ones, as well as better support for victims. In parallel, they stress giving parents easy-to-use control tools, strengthening digital and AI literacy among minors, improving transparency and users' control over their data, and ensuring that platforms systematically assess, mitigate and report risks. The principles also underline the need for coordinated action among governments, industry, researchers, educators and civil society.

Human rights

As of 8 May, end-to-end encrypted messaging on Instagram is officially no longer available. Meta has switched the feature off globally, abandoning its plan to extend this privacy technology across the platform after spending years promoting encrypted communication as the future of messaging.

Apple and Meta are opposing Canada's proposed Bill C-22, which they say could force companies to weaken encryption or build government access mechanisms into their products. Canadian authorities argue that the bill would help law enforcement respond more quickly to security threats.

After 88 days of nationwide shutdown, internet connectivity has been partially restored in Iran, ending one of the longest total shutdowns in modern history. The shutdown was imposed following the escalation of the conflict and justified by the authorities on security grounds. NetBlocks reported that connectivity had largely been restored but that indicators show users still face heavy filtering, including new restrictions on messaging and app stores.

Russian President Vladimir Putin has instructed the government and the FSB to guarantee uninterrupted access to essential medical, information and payment services during periods of mobile internet restrictions in Russia. Prime Minister Mikhail Mishustin and FSB Director Alexander Bortnikov are to report on progress by 1 July.

Legal

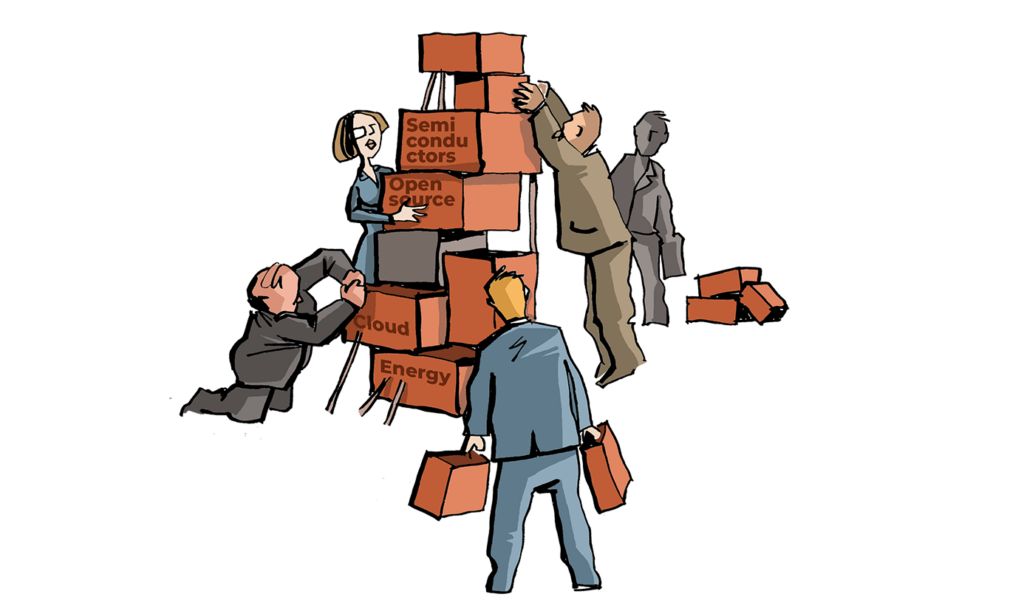

The European Commission has presented a European tech sovereignty package aimed at strengthening Europe's capabilities in semiconductors, AI, cloud infrastructure and open source technologies. The package comprises two legislative proposals, the Chips Act 2.0 and the Cloud and AI Development Act, together with an open source strategy and a strategic roadmap for digitalisation and AI in the energy sector. The proposed Chips Act 2.0 aims to expand Europe's semiconductor capabilities and to support the next generation of computing infrastructure needed for AI and other advanced technologies. The proposed Cloud and AI Development Act focuses on strengthening European cloud and AI infrastructure, including data centre capacity, as demand for computing resources grows with the spread of AI. The open source strategy is intended to support the European software ecosystem and reduce dependence on closed or externally controlled technologies. The strategic roadmap for digitalisation and AI in the energy sector aims to support the use of digital tools and AI in that sector while contributing to a more sustainable digital future.

China has updated its trade secret protection rules to explicitly cover digital assets such as data, algorithms, software code and computer programs. The framework tightens requirements for companies' protective measures, including confidentiality agreements, employee training, access controls, encryption and data management practices. It also introduces detailed provisions for digital and remote working environments, encouraging tools such as permission-based access, data masking and activity logging, while explicitly prohibiting illicit methods such as hacking, fraud and unauthorised system access to obtain trade secrets. The rules further extend protection to infringements committed abroad that affect China's domestic market or the rights of Chinese companies.

Meta Platforms faces growing legal and regulatory pressure in both the United States and Europe over allegations that its social media platforms contribute to youth addiction and mental health problems. In New Mexico, the state is seeking $3.7 billion and asking the court to declare Meta a public nuisance. The lawsuit alleges that Facebook, Instagram and WhatsApp were designed to encourage addictive behaviour among minors. It also claims that the platforms failed to adequately protect young users from harmful content and exploitation. The state is seeking major changes to the platforms, including age verification and restrictions on features such as autoplay and infinite scroll for minors. Meta argues that the case concerns individual users rather than harm to the public as a whole.

Meta is also trying to overturn a California jury verdict that found the company negligent in the design of its platforms and awarded damages to a young plaintiff who said social media use had contributed to her depression. Meta argues that such claims are barred by Section 230 of the Communications Decency Act and that the alleged harms stemmed from online content rather than from the platforms' design features.

In Milan, families and MOIGE have filed an action against Meta and TikTok for allegedly failing in their duty to protect minors on social media platforms.

The Texas Attorney General has filed a lawsuit against WhatsApp and its parent company, Meta Platforms Inc., alleging that users received misleading information about the scope and effectiveness of the protections offered by WhatsApp's end-to-end encryption. The complaint alleges that Meta and WhatsApp retained broader access to certain user communications and metadata than users would have understood.

Texas has also sued Netflix for allegedly collecting user data without consent, including viewing habits, and for turning user activity into monetised data. The Attorney General further argues that features such as autoplay were designed to increase engagement, particularly among minors, and that these practices contradict Netflix's public statements that the platform is ad-free and family-friendly.

The Court of Justice of the European Union has upheld an Italian decision requiring Meta to compensate publishers for news snippets, intensifying copyright disputes over content reuse and AI training data.

France's Cour de cassation has rejected Amazon's appeal against a minimum delivery fee for books, a measure intended to protect independent bookshops from the dominance of global platforms.

Coimisiún na Meán, Ireland's media regulator, has opened two investigations into Meta under the Digital Services Act (DSA) to determine whether Facebook and Instagram let users effectively choose recommendation feeds that are not based on profiling of their personal data. The regulator also warned that very large online platforms (VLOPs) must ensure that users can exercise their rights under the DSA without manipulation or unnecessary obstacles.

A federal jury in California has sided with OpenAI, Sam Altman and Greg Brockman in the lawsuit brought by Elon Musk, who alleged that the company had abandoned its original non-profit mission by moving to a for-profit structure. The court accepted OpenAI's argument that Musk had long been aware of the restructuring discussions, concluding that the claims were filed outside the applicable statutory time limits and dismissing the case following the jury's advisory verdict.

The European Commission has fined Temu €200 million after finding that Temu's 2024 risk assessment did not meet the standards set out in the Digital Services Act (DSA). The Commission also found that Temu had not adequately assessed how the design of its platform, in particular its recommender systems and promotion by influencers, could contribute to the amplification and spread of illegal products.

Sociocultural

Ofcom has announced a recommendation to strengthen protections against illegal intimate image abuse online. The UK regulator said it is updating its illegal content codes to recommend that certain online platforms use automated detection technologies to identify such images, including sexually explicit AI-generated deepfakes and images shared without consent. The recommendation is expected to take effect in autumn 2026, subject to parliamentary approval.

Similarly, the US Federal Trade Commission (FTC) has published guidance for online platforms on complying with Section 3 of the Take It Down Act, which took effect on 19 May 2026 and requires covered platforms to remove non-consensual intimate photos or videos within 48 hours of receiving a valid request. Covered platforms must provide clear, conspicuous, plain-language information on how people can submit removal requests for intimate photos or videos shared without consent. Platforms must make the process easy to use, including for people who do not have an account on the service. The FTC also encourages platforms to help prevent removed images from spreading again, notably through hashing technology and, where appropriate, by sharing hashes with services such as the Take It Down service run by the National Center for Missing and Exploited Children or StopNCII.org.

Papua New Guinea's Department of Information and Communications Technology has begun preparing drafting instructions and a bill on digital identity and verifiable credentials, following the adoption of the national digital identity policy. The bill will support the national rollout of SevisPass, SevisWallet, SevisDEx and other approved verifiable credentials. SevisWallet will allow citizens to register, store and present trusted digital credentials, while SevisDEx will enable secure, consent-based data exchange.

Dutch citizens and privacy advocates have challenged the planned acquisition of Solvinity, the company that hosts the Netherlands' DigiD identity system, by the US firm Kyndryl. Critics warned that letting a US company near such sensitive infrastructure could expose Dutch citizens' data to foreign jurisdiction and to surveillance risks under US law. In early May, the District Court of The Hague rejected an attempt by three Dutch citizens to stop the government from renewing its contract with Solvinity. However, the Investment Screening Bureau (BTI) advised Willemijn Aerdts, the Dutch Minister for Digital Economy and Sovereignty, to impose a full ban on the acquisition because it could pose a risk to the public interest. The minister followed that advice, and the government blocked the sale.

UNESCO and Pakistan have launched “Digital Citizens for Peace”, a media literacy initiative that aims to counter hate speech and disinformation by training young journalists and content creators. Through immersive media and information literacy camps, mentoring programmes and open-access teaching kits, the initiative seeks to strengthen responsible digital engagement and encourage fact-based content creation across the country.

Development

The Secretariat of the African Continental Free Trade Area (AfCFTA) has selected Kenya, Morocco and Nigeria as the first countries to implement a digital public infrastructure (DPI) initiative designed to support cross-border trade. The initiative, named Africa Digital Access and Public Infrastructure for Trade (ADAPT), aims to link digital identity, trusted data exchange and interoperable payment systems in order to reduce friction in intra-African trade.

Australia has introduced a national framework for digital health standards to improve interoperability and consistency across health systems. The framework addresses the fragmentation caused by digital health standards that were developed independently. It also provides guidance to support coordination among government agencies, healthcare providers and industry. In addition, it supports the use of internationally recognised clinical terminology standards and related training initiatives.

The Greek Parliament has approved a law that accelerates interoperability between public information systems, establishes a unified digital services platform on gov.gr, sets up a register of entities authorised to use government data verification services, and strengthens cybersecurity through faster blocking of phishing sites and a national register of malicious domains.

Economy

The OECD has published a study on digital trade in the Association of Southeast Asian Nations (ASEAN), examining the region's digital trade growth and the regulatory challenges that come with it. The OECD said ASEAN benefits from trade openness, growing digital adoption and evolving regional policy initiatives. The report noted, however, that uneven participation and fragmented national regulations could limit further digital trade integration in the region.

The study identified barriers including restrictions affecting cross-border data flows, telecommunications, digital services and trade facilitation systems. The OECD stressed the importance of regulatory harmonisation and of progress towards paperless trade systems. The report also discusses opportunities linked to AI adoption, including reforms to tariffs, data flows and digital services regulation. The findings underline the importance of coordinated reforms to strengthen ASEAN's role in the global digital economy.

Pakistan has enacted the Virtual Assets Act 2026, establishing a permanent legal basis for the Pakistan Virtual Assets Regulatory Authority (PVARA). The measure formalises oversight of the country's growing digital assets sector, which previously operated under temporary rules introduced in 2025. Under the new regime, PVARA is responsible for licensing, regulating and supervising virtual assets and virtual asset service providers operating in Pakistan. The law gives the authority the power to issue, suspend and revoke licences, while unauthorised operations face fines and criminal penalties.

Georgia is preparing to launch GEL₮, a cryptocurrency pegged to the Georgian lari. According to officials, the initiative is intended to support blockchain-based payment infrastructure under a dedicated regulatory regime for cryptocurrencies. Officials said the project could enable faster payments, cross-border transfers, fintech development and programmable financial services.

The Central Bank of Brazil has introduced a new requirement for virtual asset service providers seeking authorisation to operate in the country. From 1 June 2026, companies wishing to operate as “sociedades prestadoras de serviços de ativos virtuais” (SPSAV) must submit a reasonable assurance report issued by an independent auditor registered with Brazil's financial markets regulator, the Comissão de Valores Mobiliários. The audit requirement is meant to assess whether applicants have adequate compliance and control structures. Reviews will focus on anti-money laundering and counter-terrorist financing measures, including governance arrangements, customer due diligence procedures, internal risk controls and mechanisms to prevent the misuse of virtual asset services.

Xi hosts Trump and Putin within two weeks as tech diplomacy takes centre stage

While many internet users enjoyed comparing Donald Trump's and Vladimir Putin's visits to China down to the smallest detail, including the children's performances at the official ceremonies, we focus here on the technology issues raised at these meetings, with only a brief look at the similarities and differences between the two sets of talks.

The Trump-Xi summit shows some unease but hints at vague progress

Trade and technology were among the main themes expected to dominate the Trump-Xi summit held in Beijing from 13 to 15 May 2026, the first state visit to China by a US president in eight years.

A host of CEOs accompanied Trump on the trip, including Elon Musk of Tesla, Jensen Huang of Nvidia, Tim Cook of Apple, Larry Culp of GE Aerospace, Kelly Ortberg of Boeing, Dina Powell McCormick of Meta, Larry Fink of BlackRock, Stephen Schwarzman of Blackstone, Sanjay Mehrotra of Micron, Michael Miebach of Mastercard, Cristiano Amon of Qualcomm and Ryan McInerney of Visa.

But although the public has been assured that progress was made, details remain scarce.

On technology, AI was expected to be at the heart of the discussions, with reports ahead of the summit suggesting that the United States and China were considering opening formal talks on AI. US Treasury Secretary Scott Bessent told CNBC that the two AI superpowers would begin a dialogue and establish a protocol on implementing AI best practices, so as to ensure that non-state actors do not get hold of these models.

The chips that AI requires are, of course, an integral part of this story. The United States has tried to slow China's AI development by restricting sales of advanced chips, which has caught Nvidia and Jensen Huang himself in the middle. Yet the United States has reportedly cleared around ten Chinese companies to buy the H200, Nvidia's second most powerful AI chip, which is probably the breakthrough Huang was seeking in China. No deliveries have been made so far, however.

Une partie de l’histoire des puces concerne les minéraux qui les composent, et sur ce point, la Chine détient un avantage qu’elle utilise habilement comme levier dans ses relations commerciales avec les États-Unis. Le représentant américain au commerce, Jamieson Greer, a déclaré à Bloomberg que les exportations de terres rares en provenance de Chine s’amélioraient, bien que Pékin tarde encore à approuver certaines licences d’exportation. Cependant, M. Greer a également indiqué que les contrôles à l’exportation des puces n’avaient pas constitué un élément majeur des discussions.

A few days later, the White House said that China would address US concerns about supply chain shortages of rare earths and other critical minerals, as well as concerns about restrictions on the sale of rare earth production and processing equipment and technologies. Asked about that statement, China's Ministry of Commerce said the two sides had discussed the matter and would work to resolve each other's concerns, provided they are reasonable and legitimate.

In addition, ByteDance has reportedly agreed to buy millions of ASIC chips from Qualcomm for its fast-growing AI infrastructure and agentic systems, a deal that may have been concluded during the visit.

Russia-China summit signals closer cooperation on AI, industry, and the digital economy

The talks between Putin and Xi on 19 and 20 May 2026 produced a communiqué, a fact that is revealing in itself. The document is fairly long and, although not very technical, it still contains important signals.

Putin and Xi stressed that AI should be developed and deployed in ways that serve universal development rather than narrow national interests. At the same time, they explicitly criticised the use of AI by certain states as a geopolitical instrument to preserve or strengthen their global dominance.

The leaders also highlighted growing cooperation on the military dimension of AI. Russia and China intend to deepen their collaboration on the development and application of AI in military contexts, both through bilateral channels and within multilateral processes, including discussions in the Group of Governmental Experts on Lethal Autonomous Weapons Systems (LAWS).

Both sides committed to coordinating their positions on AI-related scientific and policy issues in international organisations. This points to an effort to align their approaches more systematically in global standardisation and norm-setting forums. It is not unusual for China and Russia to take similar positions in negotiations on digital issues, but this was a fairly explicit geopolitical signal.

China and Russia will merge their GLONASS and Beidou satellite navigation systems into a complementary global service, cooperate on the coordination and use of radio frequencies and orbital slots, and expand their cooperation on satellite internet and the internet of things.

Cooperation on open-source software also emerged as a strategic priority. The two countries plan to explore a bilateral software cooperation mechanism and to promote open-source technologies in key sectors. This is part of broader efforts by both countries to localise their software ecosystems and reduce their dependence on US technology platforms.

The parties agreed to strengthen their cooperation in combating cybercrime. They welcomed the UN General Assembly's adoption of the United Nations Convention against Cybercrime and committed to its swift entry into force and implementation, including by working on an additional protocol to broaden its scope and strengthen international cooperation.

They committed to deepening their strategic cooperation on information security and to coordinating their responses to ICT threats. They also intend to exchange experience on the legislative regulation of the internet.

They underlined the central role of the UN in responding to threats in the information space, and then expressed support for the work of the Global Mechanism. This is not surprising either, given that the Global Mechanism grew out of the Open-ended Working Group (OEWG), which was itself a Russian initiative. The countries also expressed support for developing broader international legal instruments covering issues such as data security and supply chain resilience. This is in line with China's long-standing efforts to advance its Global Initiative on Data Security.

The parties agreed to expand their cooperation in the digital economy and cross-border e-commerce, alongside broader collaboration in sectors such as automotive, aviation, and mining. They committed to strengthening coordination on intellectual property protection and consumer protection in online and cross-border services.

They also agreed to deepen e-commerce cooperation through regional frameworks, including the Eurasian Economic Union (EAEU), the Belt and Road Initiative, the Shanghai Cooperation Organisation (SCO), APEC, and the Greater Tumen Initiative. Cooperation will focus on digitalisation, logistics, barrier-free trade, connectivity, and innovation, with the broader aim of boosting regional economic integration, trade, and employment.

This part is not surprising, given that China and Russia have repeatedly presented e-commerce, the digital economy, and ICT cooperation as strategic pillars of their partnership since at least the mid-2010s, in both the SCO and Belt and Road contexts.

Comparing the two summits

No joint communiqué followed the Xi-Trump meeting, whereas the Xi-Putin summit produced a long and detailed one. That fact alone is quite telling. Xi and Putin also signed more than 20 agreements covering energy, trade, science and technology, and infrastructure, while it is still unknown how many agreements Xi and Trump signed.

In the US-China context, Bessent's comments during Trump's visit to China highlight the persistent technological competition between the two countries. He stressed that the United States is able to hold constructive discussions with China on AI because it is in the lead, and admitted bluntly that he did not think the same discussions would be taking place if China were that far ahead. President Trump also postponed signing the executive order on AI and cybersecurity, saying he did not want new rules to slow US leadership in AI or weaken its competitive edge over China, which reinforces the competitive framing. This stands in direct contrast to China's cooperation with Russia and their planned coordination on AI-related scientific and policy issues in international organisations.

China's engagement with Russia took a more cooperative and forward-looking form. The two sides agreed to actively promote cooperation on joint mining and on the development of green standards. Russia holds some of the world's largest rare earth reserves, ranking fifth globally, but remains constrained by limited extraction, refining, and processing capacity, an area in which it stands to gain from Chinese technological and industrial expertise.

Overall, the difference in outcomes and in the weight given to each issue suggests that Russia and China are more comfortable addressing sensitive areas such as AI cooperation and military applications, while US-China engagement remains more constrained and competitive in both tone and scope.

Last month in Geneva

Geneva Cyber Week 2026

The United Nations Institute for Disarmament Research (UNIDIR) and the Swiss Federal Department of Foreign Affairs (FDFA) co-organised Geneva Cyber Week from 4 to 8 May 2026. The event brought together policymakers, diplomats, technical experts, business leaders, academics, and civil society representatives at venues across Geneva and online for a week of discussions on cyber stability, resilience, governance, digitalisation, and the security implications of emerging technologies, including AI.

Returning after its inaugural edition, the event positioned itself as a response to a more fragile cyber and geopolitical environment. Held under the theme "Advancing Global Cooperation in Cyberspace", Geneva Cyber Week 2026 took place against a backdrop of growing cyber insecurity, intensifying geopolitical tensions, and rapid technological change. The programme included nearly 90 events and reinforced Geneva's role as a hub for cyber diplomacy, international cooperation, and digital governance.

As part of Geneva Cyber Week, UNIDIR held the Cyber Stability Conference 2026 on 4 and 5 May in Geneva and online, bringing together representatives of governments, international organisations, the private sector, academia, and civil society to discuss ICT security and cyber governance. Under the theme "Cyber governance in the age of technological revolution: lessons from the past, realities of the present, and frontiers of the future", discussions focused on how international cyber stability frameworks are adapting to rapid technological change, notably AI and quantum computing, while drawing lessons from past cyber diplomacy processes and current security challenges.

Multi-year Expert Meeting on Investment, Innovation and Entrepreneurship for Productive Capacity-building and Sustainable Development, twelfth session

UNCTAD's Multi-year Expert Meeting on Investment, Innovation and Entrepreneurship for Productive Capacity-building and Sustainable Development held its twelfth session on 4 and 5 May. Experts warned that AI and other strategic technologies are reshaping global investment patterns, concentrating capital in a handful of sectors and countries while leaving many developing economies behind. Discussions focused on how developing countries can compete in AI-related sectors, strengthen their national innovation ecosystems, and ensure that AI-driven investment translates into broader development gains.

OSCE conference "Anticipating Technologies: For a Safe and Humane Future" opens in Geneva

On 7 May, the Swiss Chairpersonship of the Organization for Security and Co-operation in Europe opened a two-day high-level conference in Geneva on anticipating technologies. The event examined how foresight, dialogue, and international cooperation can help reduce misunderstandings, build trust, and strengthen security across the OSCE region amid rapid technological change.

The programme included discussions on anticipating technological change and its geopolitical impact, water and energy security in the digital age, and the role of AI in early warning and conflict prevention.

The conference also highlighted Geneva's role as a meeting point between science and diplomacy, notably through institutions such as CERN, the Geneva Science and Diplomacy Anticipator, and the Open Quantum Institute.

The event is part of the Chairpersonship's priority of linking scientific and technological anticipation to political action.

WTO resumes negotiations as 19 members back a commitment to the e-commerce moratorium

The WTO General Council met in Geneva on 8 May for the first time since the 14th Ministerial Conference (MC14), after negotiators in Yaoundé narrowly failed to reach agreement on several major files, including the future of the long-standing e-commerce moratorium and broader WTO reform.

The new chair, Ambassador Clare Kelly, said that members remained determined to preserve the fragile balance reached during the negotiations in Cameroon and to avoid sliding back to earlier positions.