AI is reshaping the expectations placed on organisations, yet many local governments in the US continue to rely on procurement systems designed for a paper-first era.

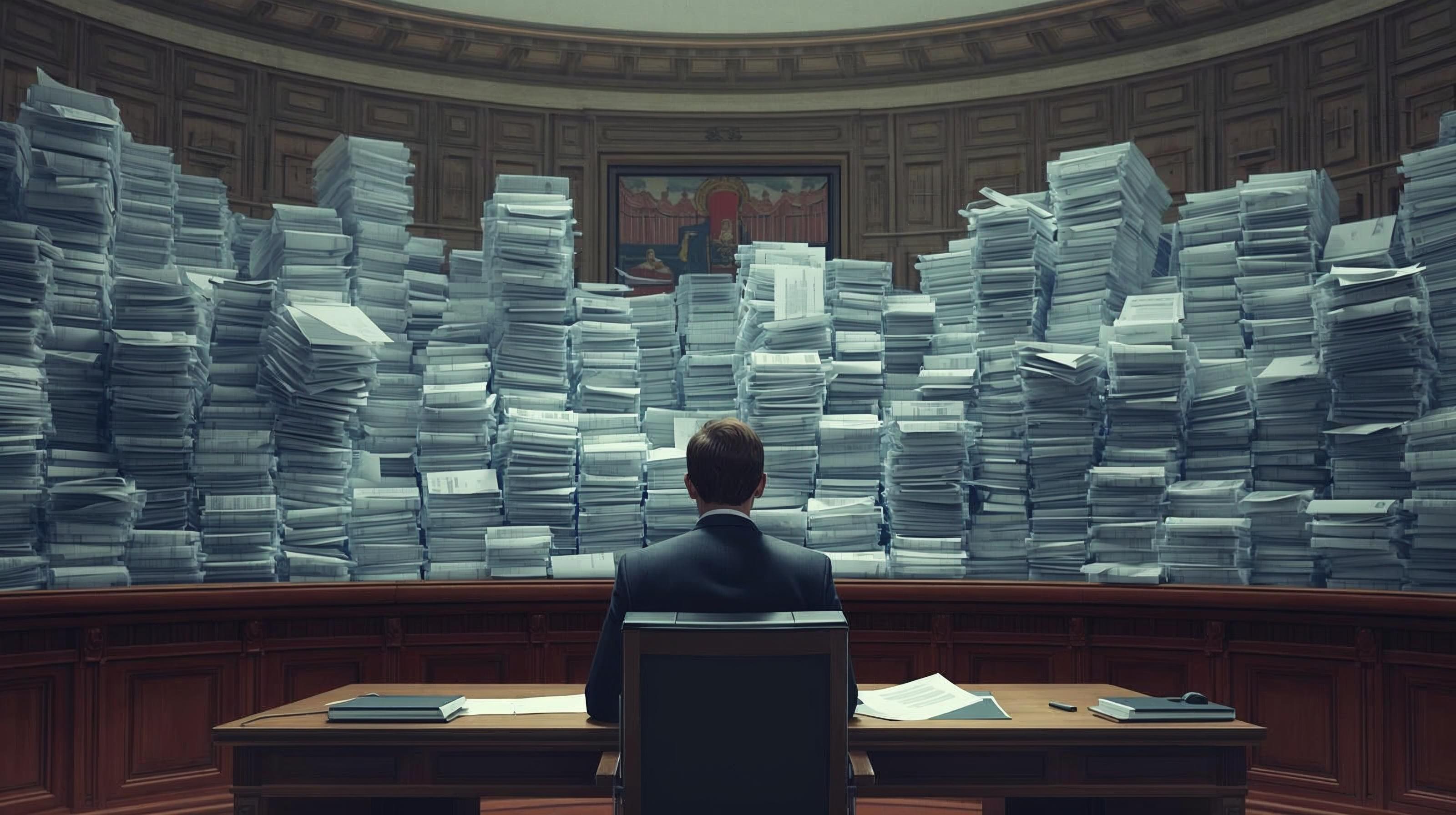

Sealed envelopes, manual logging and physical storage remain standard practice, even though these steps slow essential services and increase operational pressure on staff and vendors.

The persistence of paper is linked to long-standing compliance requirements, which are vital for public accountability. Over time, however, processes intended to safeguard fairness have created significant inefficiencies.

Smaller businesses frequently struggle with printing, delivery, and rigid submission windows, and the administrative burden on procurement teams expands as records accumulate.

Where municipalities have gradually adopted digital submission, logistical barriers have fallen and compliance has strengthened. Electronic bids can be time-stamped, access to them monitored, and records centrally managed, allowing staff to focus on evaluation rather than handling binders and storage boxes.
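As a rough illustration of what time-stamping an electronic bid can involve, the Python sketch below records a receipt for each submission. It is a minimal example under stated assumptions, not a description of any municipality's system: the `BidReceipt` type, the `record_bid` function, and all field names are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class BidReceipt:
    """Hypothetical receipt stored for every electronic bid."""
    vendor: str
    sha256: str            # fingerprint of the submitted document
    received_at: datetime  # time the bid was recorded (UTC)
    on_time: bool          # True if received at or before the deadline


def record_bid(vendor: str, document: bytes, deadline: datetime,
               now: Optional[datetime] = None) -> BidReceipt:
    """Time-stamp a bid and fingerprint its contents.

    `now` can be passed explicitly for testing; otherwise the current
    UTC time is used.
    """
    received_at = now if now is not None else datetime.now(timezone.utc)
    digest = hashlib.sha256(document).hexdigest()
    return BidReceipt(vendor, digest, received_at, received_at <= deadline)


# Example with made-up values: a bid arriving one minute before the deadline.
deadline = datetime(2025, 3, 1, 17, 0, tzinfo=timezone.utc)
receipt = record_bid("Example Vendor", b"bid contents", deadline,
                     now=datetime(2025, 3, 1, 16, 59, tzinfo=timezone.utc))
print(receipt.on_time, receipt.sha256[:12])
```

Storing a hash alongside the timestamp means a later copy of the document can be checked against what was actually received, which plays the role the sealed envelope plays on paper.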

Vendor participation increased once geographical and physical constraints were removed. The shift also improved resilience, as municipalities that had already embraced digital procurement were better equipped to maintain continuity during pandemic disruptions.

Electronic records now provide a basis for responsible use of AI. Digital documents can be analysed for anomalies, metadata inconsistencies, or signs of manipulation that are difficult to detect in paper files.
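To make the idea of metadata inconsistencies concrete, the sketch below applies two simple rule-based checks to a list of bid records. It is only an assumed illustration: the `flag_anomalies` function and the record fields (`vendor`, `sha256`, `received_at`, `modified_at`) are hypothetical, and real AI-assisted tools would use far richer signals. Flags are meant as prompts for human review, not verdicts.

```python
from collections import defaultdict
from datetime import datetime, timezone


def flag_anomalies(bids):
    """Return {vendor: [reasons]} for bids a human reviewer should examine.

    Each bid is a dict with hypothetical keys: 'vendor', 'sha256',
    'received_at' and 'modified_at' (the last two are datetimes).
    """
    flags = defaultdict(list)
    vendors_by_hash = defaultdict(list)

    for bid in bids:
        vendors_by_hash[bid["sha256"]].append(bid["vendor"])
        # A file whose metadata says it changed after receipt is inconsistent.
        if bid["modified_at"] > bid["received_at"]:
            flags[bid["vendor"]].append("modified after receipt")

    # Byte-identical documents from different vendors deserve a closer look.
    for vendors in vendors_by_hash.values():
        if len(set(vendors)) > 1:
            for vendor in vendors:
                flags[vendor].append("identical document from another vendor")

    return dict(flags)


# Example with made-up records.
t = datetime(2025, 3, 1, 12, 0, tzinfo=timezone.utc)
bids = [
    {"vendor": "A", "sha256": "abc", "received_at": t, "modified_at": t},
    {"vendor": "B", "sha256": "abc", "received_at": t, "modified_at": t},
    {"vendor": "C", "sha256": "def", "received_at": t,
     "modified_at": datetime(2025, 3, 2, 9, 0, tzinfo=timezone.utc)},
]
print(flag_anomalies(bids))
```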

Rather than replacing human judgment, such tools support stronger oversight and more transparent public administration. Modernising procurement aligns government operations with present-day realities and prepares them for future accountability demands and technological change.
