OpenAI has published a new overview of the safety measures built into Sora 2 and the Sora app, setting out how the company says it is approaching provenance, likeness protection, teen safeguards, harmful-content filtering, audio controls, and user reporting tools. The Sora team published the note on 23 March 2026.

OpenAI says every video generated with Sora includes both visible and invisible provenance signals, and that all videos embed C2PA metadata. The company adds that many outputs carry visible moving watermarks that include the creator’s name, and that it uses internal reverse-image and audio search tools to trace videos back to Sora.

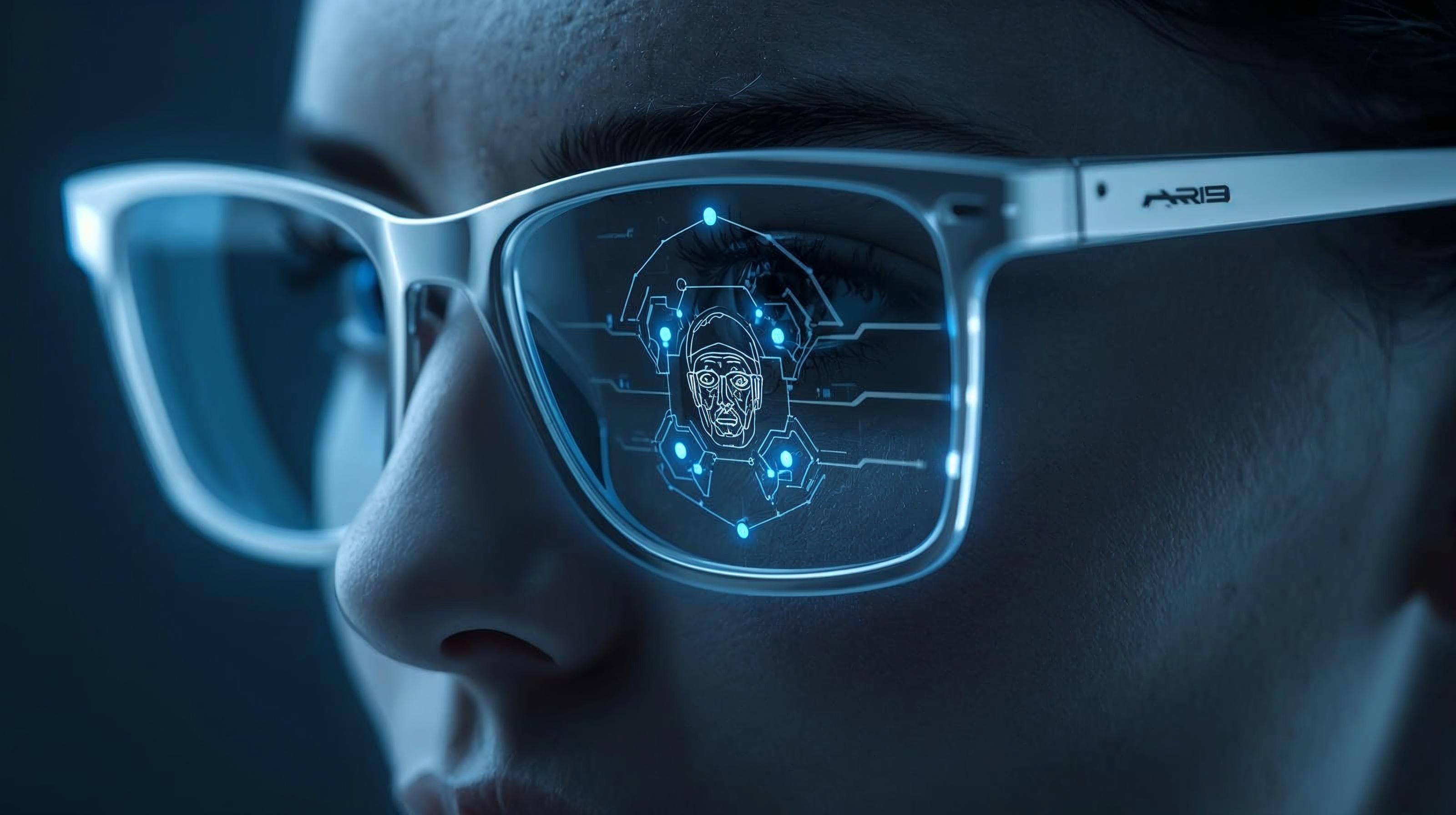

A substantial part of the update focuses on likeness and consent. OpenAI says users can upload images of people to generate videos, but only after attesting that they have consent from the people featured and the right to upload the media. OpenAI also says image-to-video generations involving people are subject to stricter safeguards than Sora Characters, and that images including children or young-looking people face stricter moderation. According to the company, shared videos generated from such images will always carry watermarks.

OpenAI also sets out controls linked to its characters feature, which it says is intended to give users stronger control over their likeness, including both appearance and voice. According to the company, users can decide who can use their characters, revoke access at any time, and review, delete, or report videos featuring their characters. OpenAI says it also applies additional restrictions designed to limit major changes to a person’s appearance, avoid embarrassing uses, and maintain broadly consistent identity presentation.

Protections for younger users form another part of the update. OpenAI says teen accounts are subject to stronger limitations on mature output, that age-inappropriate or harmful content is filtered from teen feeds, and that adult users cannot initiate direct messages with teens. Parental controls in ChatGPT can also be used to manage teen messaging permissions and to select a non-personalised feed in the app, while default limits apply to continuous scrolling for teens.

OpenAI says harmful-content controls operate at both creation and distribution stages. Prompt and output checks are used across multiple video frames and audio transcripts to block content including sexual material, terrorist propaganda, and self-harm promotion. OpenAI also says it has tightened policies for video generation compared with image generation because of added realism, motion, and audio, while automated systems and human review are used to monitor feed content against its global usage policies.

Audio generation is treated separately in the note. OpenAI says generated speech transcripts are automatically scanned for possible policy violations, and that prompts intended to imitate living artists or existing works are blocked. The company also says it honours takedown requests from creators who believe an output infringes their work.

User controls and recourse are presented as the final layer. OpenAI says users can choose whether to share videos to the feed, remove published content, and report videos, profiles, direct messages, comments, and characters for abuse. Blocking tools are also available, according to the company, to stop other users from viewing a profile or posts, using a character, or contacting someone through direct message.

OpenAI’s post is framed as a product-safety explanation rather than an independent assessment of how well the measures work in practice. Much of the note describes controls that the company says it has built into Sora 2, but the published summary does not include external evaluation data.