According to Goldman Sachs, the surge in AI is set to transform global energy markets, with data centres expected to consume 165% more electricity by 2030 compared to 2023. The bank reports that US spending on data centre construction has tripled in just three years, while occupancy rates at existing facilities remain close to record highs.

The demand is driven by hyperscale operators like Amazon Web Services, Microsoft Azure, and Google Cloud, which are rapidly expanding their infrastructure to meet the power-hungry needs of AI systems.

Global data centres use about 55 gigawatts of electricity, more than half of which supports cloud computing. Traditional workloads like email and storage still account for a third, while AI represents just 14%.

However, Goldman Sachs projects that by 2027, overall consumption could rise to 84 gigawatts, with AI’s share growing to over a quarter. That shift is straining grids and pushing operators toward new solutions, as AI server racks can consume ten times more electricity than traditional ones.
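To put those figures in perspective, the arithmetic can be sketched in a few lines of Python. This is a rough illustration rather than Goldman Sachs's own model: the function and variable names are made up for the example, and "over a quarter" is treated as exactly 25%, so the 2027 AI figures are a lower bound.

```python
# Figures quoted in the article (Goldman Sachs estimates).
CURRENT_TOTAL_GW = 55.0    # global data centre demand today
CURRENT_AI_SHARE = 0.14    # AI's share of that demand today
PROJECTED_TOTAL_GW = 84.0  # projected global demand by 2027

# Assumption: "over a quarter" is taken as exactly 25%, so anything
# derived from this value is a floor, not a forecast.
PROJECTED_AI_SHARE_FLOOR = 0.25


def ai_load_gw(total_gw: float, ai_share: float) -> float:
    """Return the portion of total demand attributable to AI, in gigawatts."""
    return total_gw * ai_share


def percent_increase(old: float, new: float) -> float:
    """Return the percentage change from old to new."""
    return (new - old) / old * 100.0


ai_now = ai_load_gw(CURRENT_TOTAL_GW, CURRENT_AI_SHARE)
ai_2027_floor = ai_load_gw(PROJECTED_TOTAL_GW, PROJECTED_AI_SHARE_FLOOR)
total_growth = percent_increase(CURRENT_TOTAL_GW, PROJECTED_TOTAL_GW)
ai_multiple = ai_2027_floor / ai_now

print(f"AI load today:         {ai_now:.1f} GW")
print(f"AI load by 2027:       at least {ai_2027_floor:.1f} GW")
print(f"Total demand growth:   {total_growth:.1f}%")
print(f"AI load growth factor: at least {ai_multiple:.2f}x")
```

On these assumptions, total demand grows by roughly half while AI's own load nearly triples, which is why AI accounts for a disproportionate share of the new strain on grids.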

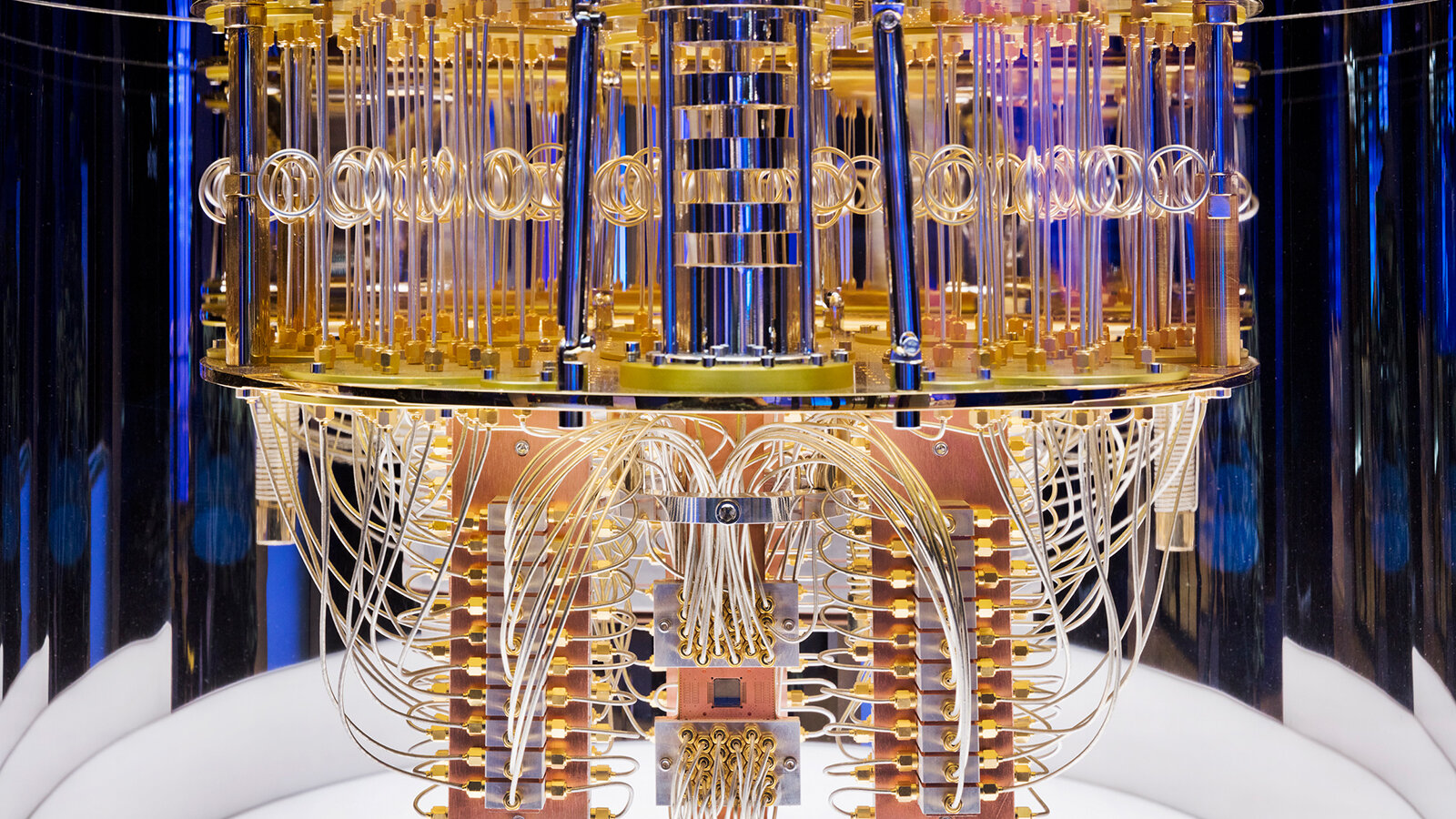

Meeting this demand will require massive investment. Goldman Sachs estimates that global grid upgrades could cost as much as US$720 billion by 2030, with US utilities alone needing an additional US$50 billion in new generation capacity for data centres.

While renewables like wind and solar are increasingly cost-competitive, their intermittent output means operators lean on hybrid models with backup gas and battery storage. At the same time, technology companies are reviving interest in nuclear power, with contracts for over 10 gigawatts of new capacity signed in the US last year.

The expansion is most evident in Europe and North America, with Nordic countries, Spain, and France attracting investment due to their renewable energy resources. Established hubs like Germany, Britain, and Ireland, meanwhile, rely on incentives and mature ecosystems.

Yet uncertainty remains. Advances like DeepSeek, a Chinese AI model reportedly as capable as US systems but more efficient, could temper power demand growth. For now, however, the trajectory is clear: AI is reshaping the data centre industry and the global energy landscape.
