YouTube has announced the expansion of its likeness detection technology to the entertainment industry, extending access beyond content creators to talent agencies, management companies and the individuals they represent.

The move is part of a broader effort by the platform to address the growing misuse of AI to generate misleading or unauthorised videos of public figures. By extending the tool to entertainment industry stakeholders, YouTube is signalling that AI-driven impersonation is no longer treated as a niche creator issue but as a broader identity and rights problem.

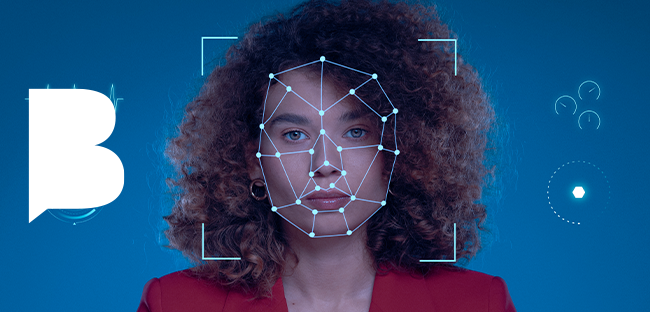

The system works much like Content ID, YouTube’s copyright-matching system: it lets eligible users identify videos that use AI to replicate a person’s face or likeness. Once such content is detected, individuals can request its removal through YouTube’s existing privacy complaint process.

The rollout has been developed with input from major industry players, including Creative Artists Agency, United Talent Agency, William Morris Endeavor, and Untitled Management. Those partnerships are intended to help YouTube refine how the system works in practice and ensure it reflects the needs of artists and rights holders dealing with synthetic media.

Importantly, access to the tool is not limited to people who actively run YouTube channels. Celebrities and public figures can use it even without a direct creator presence on the platform, extending its reach across a much broader part of the entertainment ecosystem.

The update is significant because it shows platforms beginning to treat AI impersonation as a governance issue rather than merely a content-moderation problem.

As synthetic media tools become easier to use and more convincing, technology companies are under growing pressure to provide faster and more credible mechanisms for detecting misuse, protecting identity rights, and limiting deceptive content.

YouTube’s latest move shows that platform responses are becoming more structured and rights-based, especially in sectors where a person’s likeness is closely tied to reputation, image, and commercial value. The bigger question now is whether such tools will prove effective enough to keep pace with the scale and speed of AI-generated impersonation online.
