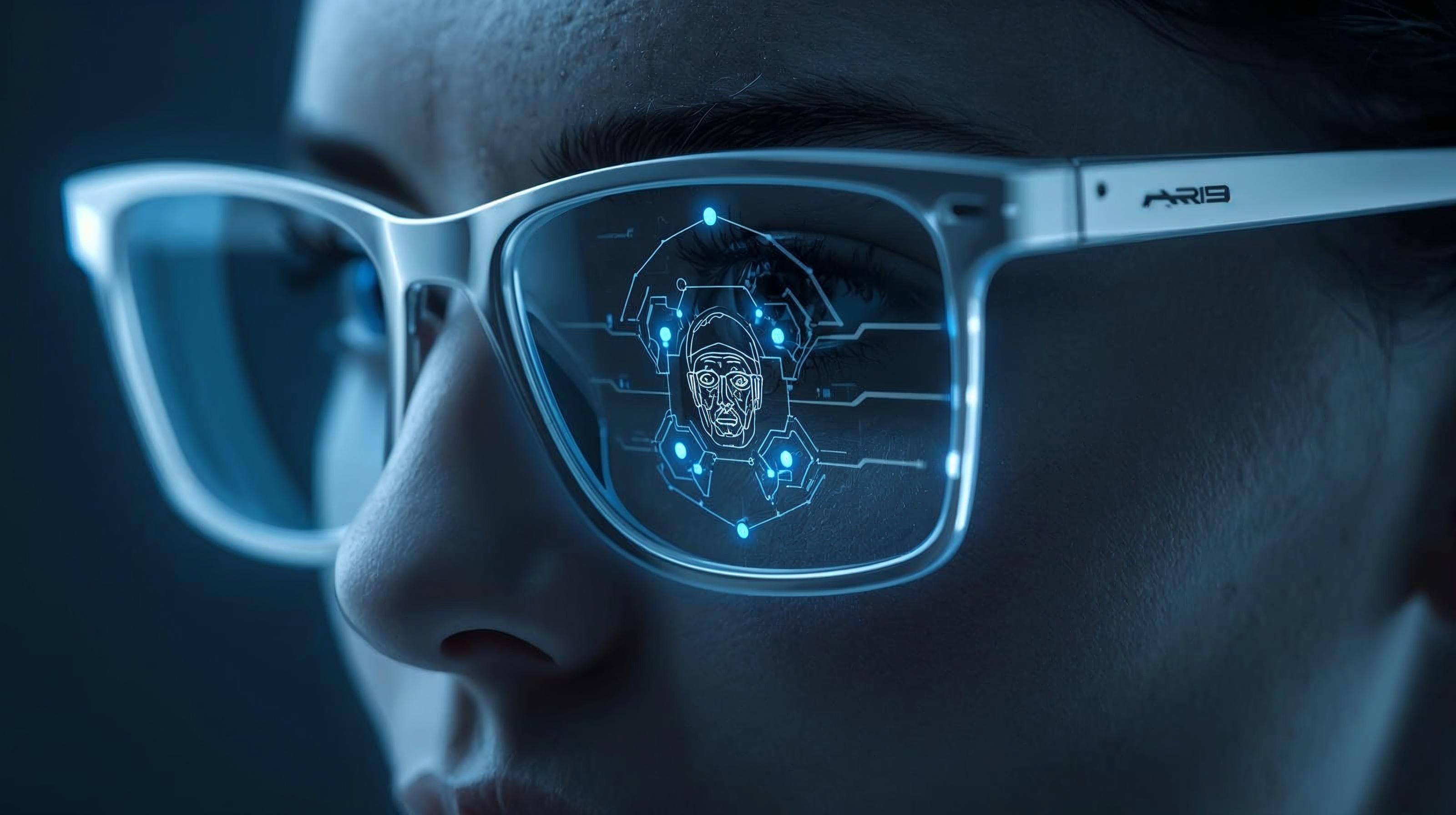

Three Democratic senators have raised concerns about Meta’s reported exploration of facial recognition in its smart glasses, warning that it could normalise public surveillance. In a letter to CEO Mark Zuckerberg, Senators Edward Markey, Ron Wyden, and Jeff Merkley asked about consent, biometric data, and the risks of misuse.

The lawmakers said the proposed feature ‘risks normalising mass surveillance at a moment when the federal government is using similar tools to intimidate protesters and chill speech. Although facial recognition may offer real benefits for blind and visually impaired users, Meta’s history of failing to protect user privacy raises serious questions about its plan to deploy this technology in its smart glasses.’

‘Americans do not consent to biometric data collection simply by walking down a public street, entering a café, or standing in a crowd,’ the senators added. ‘Yet, the deployment of this technology would appear to do exactly that – subjecting countless individuals to covert identification without notice, without consent, and without any meaningful opportunity to opt out.’ They warned that such practices would erode longstanding expectations of privacy in public spaces, effectively eliminating public anonymity.

Concerns grew after reports of US Border Patrol and ICE agents using Meta smart glasses. While there is no evidence that the agents used facial recognition, the senators argue that adding identification tools to eyewear could expand undetectable surveillance. The letter asks whether Meta might link facial data with information from its platforms, enabling real-time identification tied to user profiles. The lawmakers warn that this could increase the risks of harassment and targeting.

Meta discontinued facial recognition on Facebook in 2021, citing societal concerns. The senators argue that reintroducing similar technology in wearable devices suggests a shift in the company’s approach rather than a retreat from the technology. ‘Five years later, Meta appears less worried about those societal concerns and is reportedly planning to deploy facial recognition technology in one of the most dangerous possible settings,’ they wrote.

‘Moreover,’ they continued, ‘Meta is apparently aware of the risks with this technology,’ noting that ‘an internal memo recommended launching the product “during a dynamic political environment where many civil society groups that we would expect to attack us would have their resources focused on other concerns.”’

‘In other words,’ the senators added, ‘Meta appears to recognise the serious privacy and civil liberties risks of facial recognition but thinks it can avoid attention by slipping the once-abandoned, ethically fraught product back onto the market while the world is distracted by the Trump administration’s daily chaos.’

The senators have asked Meta to clarify how it would obtain consent from both users and bystanders, how long it would retain biometric data, whether it would use it to train AI models, and whether it could share it with law enforcement, including the Department of Homeland Security. The company has been given until 6 April to respond.
