Partnerships across the robotics sector are positioning NVIDIA at the centre of what is increasingly described as ‘physical AI’, a shift towards intelligent machines capable of perceiving, reasoning and acting in real environments.

A new generation of tools, including NVIDIA Cosmos world models and updated NVIDIA Isaac simulation frameworks, aims to support developers in training and validating robots before deployment.

These systems enable companies to simulate complex environments, reducing the risks and costs of real-world testing.

Industrial robotics leaders such as ABB Robotics, KUKA, and FANUC are integrating NVIDIA technologies into digital twin environments, enabling more accurate modelling of production lines and automation systems.

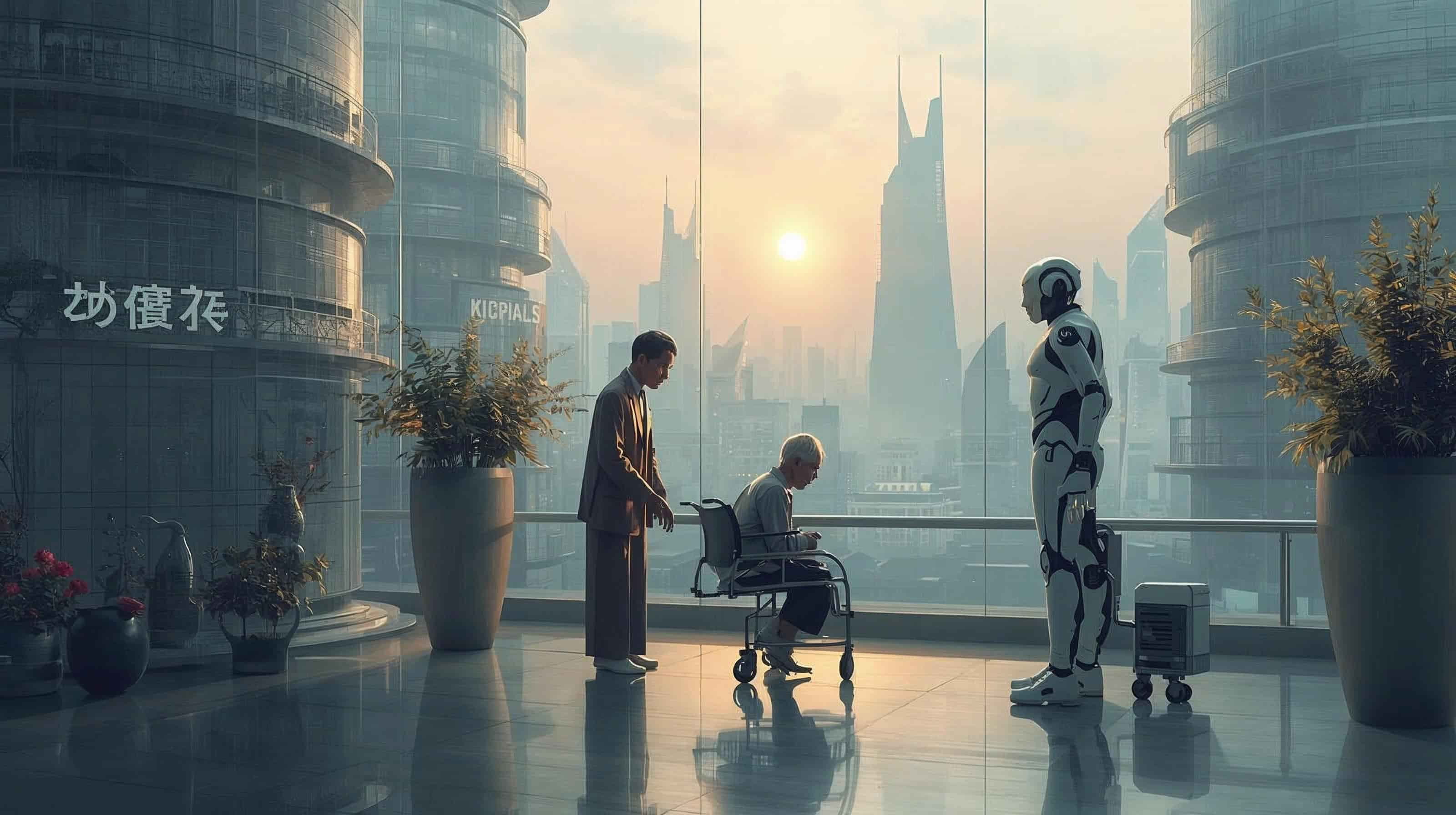

Advances are also extending into humanoid robotics, where companies are using AI models to develop machines capable of more flexible and adaptive behaviour.

New foundation models, including NVIDIA's GR00T systems, are designed to give robots general-purpose capabilities rather than limiting them to specific tasks.

Healthcare and logistics represent additional areas of deployment, with robotics platforms being tested in surgical systems, warehouse automation and manufacturing environments. These applications highlight how physical AI could reshape industries requiring precision, safety and scalability.

Growing collaboration across cloud providers, manufacturers and AI developers suggests that robotics is moving toward a more integrated ecosystem, where simulation, data generation and deployment are increasingly interconnected.
