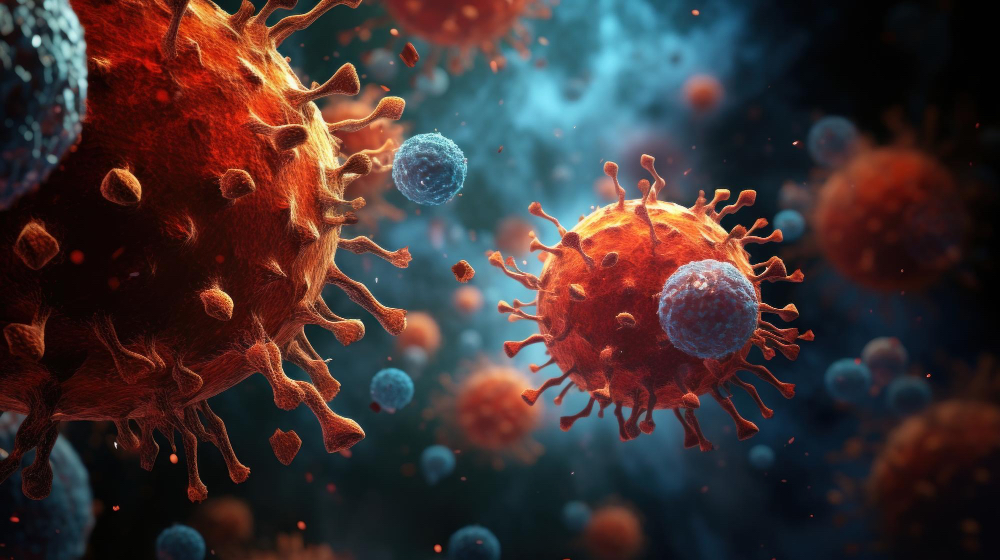

Researchers at the University of Tokyo have created scHDeepInsight, an AI platform that quickly and reliably classifies immune cells from single-cell RNA sequencing data. The system turns each cell's gene expression profile into an image and uses a hierarchy-aware convolutional neural network (CNN) to identify broad immune types and finer subtypes.

By reflecting the natural structure of the immune system, the tool improves accuracy and consistency compared with previous methods.
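To make the idea of hierarchy-aware labelling concrete, here is a minimal Python sketch of one way a prediction could be kept consistent with a cell-type tree. It is an illustration only: the two-level `HIERARCHY`, the probability values and the `predict_consistent` helper are hypothetical and are not taken from scHDeepInsight itself.

```python
import numpy as np

# Hypothetical two-level hierarchy: broad immune type -> finer subtypes.
HIERARCHY = {
    "T cell": ["CD4 T", "CD8 T"],
    "B cell": ["Naive B", "Memory B"],
}
BASE_TYPES = list(HIERARCHY)
SUBTYPES = [s for subs in HIERARCHY.values() for s in subs]


def predict_consistent(base_probs, sub_probs):
    """Pick the broad type first, then the best subtype among its children only."""
    base = BASE_TYPES[int(np.argmax(base_probs))]
    allowed = [SUBTYPES.index(s) for s in HIERARCHY[base]]
    best = max(allowed, key=lambda i: sub_probs[i])
    return base, SUBTYPES[best]


# Made-up classifier outputs for a single cell.
base_probs = np.array([0.7, 0.3])               # T cell, B cell
sub_probs = np.array([0.30, 0.25, 0.40, 0.05])  # CD4 T, CD8 T, Naive B, Memory B

label = predict_consistent(base_probs, sub_probs)
print(label)
```

In this toy case the highest-scoring subtype overall is "Naive B", which would contradict the broad "T cell" call. Restricting the choice to the children of the predicted broad type avoids that kind of inconsistency.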

scHDeepInsight uses hierarchical learning to mirror the immune system’s ‘family tree’ and image-based gene representation to capture complex gene relationships. It also includes built-in analytics to highlight key genes influencing cell behaviour.
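The image-based representation can be pictured with a short sketch: genes that behave similarly across cells are placed near each other on a pixel grid, and each cell's expression values are then painted onto that grid. The code below is a simplified stand-in that assumes a plain two-component PCA layout and random toy data; the function names `gene_layout` and `cell_to_image` are hypothetical, and the actual tool may arrange genes differently.

```python
import numpy as np


def gene_layout(expr, size):
    """Assign each gene a pixel using its top two principal components.

    expr is a (cells x genes) matrix; genes with similar expression
    patterns across cells end up close together on the grid.
    """
    genes = expr.T - expr.T.mean(axis=0, keepdims=True)
    u, s, _ = np.linalg.svd(genes, full_matrices=False)
    coords = u[:, :2] * s[:2]
    mins = coords.min(axis=0)
    span = coords.max(axis=0) - mins
    span[span == 0] = 1.0  # guard against a degenerate axis
    return np.floor((coords - mins) / span * (size - 1)).astype(int)


def cell_to_image(cell, pixels, size):
    """Paint one cell's expression vector onto the grid, averaging genes that share a pixel."""
    total = np.zeros((size, size))
    counts = np.zeros((size, size))
    np.add.at(total, (pixels[:, 0], pixels[:, 1]), cell)
    np.add.at(counts, (pixels[:, 0], pixels[:, 1]), 1)
    return np.divide(total, counts, out=np.zeros_like(total), where=counts > 0)


# Toy data: 50 cells, 200 genes (random counts, not real measurements).
rng = np.random.default_rng(0)
expr = rng.poisson(2.0, size=(50, 200)).astype(float)

pixels = gene_layout(expr, size=16)
image = cell_to_image(expr[0], pixels, size=16)
print(image.shape)
```

The resulting 16 x 16 array is the kind of input a CNN could consume, with one such image per cell.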

The platform labels thousands of cells within minutes, a task that can take hours or days manually, and uses adjusted training processes so that rare cell populations are correctly identified.
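The article does not say how training is adjusted for rare populations. One common approach, shown here purely as an assumption, is to weight each class inversely to its frequency so that mistakes on rare cells count for more in the loss. The helper name `inverse_frequency_weights` and the toy labels are hypothetical.

```python
import numpy as np


def inverse_frequency_weights(labels):
    """Return a weight per class: total / (number of classes * class count)."""
    classes, counts = np.unique(labels, return_counts=True)
    weights = counts.sum() / (len(classes) * counts)
    return dict(zip(classes.tolist(), weights.tolist()))


# Toy label set: 90 cells of a common type, 10 of a rare type.
labels = np.array(["common"] * 90 + ["rare"] * 10)
weights = inverse_frequency_weights(labels)
print(weights)
```

With these weights a misclassified rare cell contributes nine times as much to a weighted loss as a misclassified common cell, which discourages a model from ignoring small populations.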

While primarily a research tool, scHDeepInsight provides a baseline of healthy immune cells against which disease-related immune changes can be compared, aiding studies in cancer, infections, and autoimmune conditions.

Researchers can apply it to patient samples to identify deviations from normal patterns, though clinical interpretation requires further validation. The system is already available as a downloadable package for laboratory use.

The team aims to expand scHDeepInsight to other biological areas, supporting clinical research and potentially the discovery of new cell types. By combining AI with experimental validation, the researchers hope to improve understanding of cellular systems and speed up discoveries in immunology.
