An opinion article published by the International Association of Privacy Professionals (IAPP) says India’s data protection and AI governance environment is under growing pressure as compliance work on the Digital Personal Data Protection Act (DPDPA) proceeds, court challenges continue, and regulators extend oversight into new sectors. The piece, published on 26 March, is labelled as opinion and carries an editor’s note stating that the IAPP is policy neutral and publishes contributed opinion pieces to reflect a broad spectrum of views.

The article says several legal and regulatory developments are unfolding simultaneously. One example cited is a public interest litigation filed before India’s Supreme Court by journalist Geeta Seshu and the Software Freedom Law Centre, India, challenging parts of the DPDPA on constitutional and rights-related grounds. According to the piece, the Supreme Court later issued a notice to the Government of India on 12 March.

Concerns outlined in the article include the absence of journalistic exemptions, the lack of compensation for data breach victims when penalties are paid to the government, broad state powers to exempt departments from the law, and questions about the independence of the Data Protection Board given the government’s control over appointments. The article notes that similar petitions had already been filed, but says this was the first time the court issued notice to the government.

The article also turns to proceedings before the Kerala High Court involving privacy concerns about biometric and personal data collected through Digi Yatra, a not-for-profit foundation that operates airport passenger-processing infrastructure in India. According to the piece, a public interest litigation filed by C R Neelakandan asked for a temporary restraint on the sharing of collected personal data and its commercial use without proper authorisation.

The article says the Kerala High Court issued notice to the Digi Yatra Foundation and sought clarification from the government on whether the Data Protection Board had been established to oversee such matters.

Alongside the litigation, the opinion piece points to government efforts to show legal preparedness for AI-related risks. It says Electronics and Information Technology Minister Ashwini Vaishnaw outlined existing safeguards during the ongoing parliamentary session, referring to the Information Technology Act, the DPDPA, and subordinate rules, along with published guidelines on AI governance, toy safety, harmful content, awareness-building measures, and cyber safety.

Cybersecurity developments also feature in the article. It says the Indian Computer Emergency Response Team, working with the SatCom Industry Association, issued guidelines on 26 February for the space sector, including satellite communications. According to the piece, the framework is intended to strengthen resilience in India’s space ecosystem.

The framework applies to covered entities, including government agencies, satellite service providers, ground station operators, terminal equipment vendors, and private space entities. Incident reporting within six hours and annual audits are among the measures described.

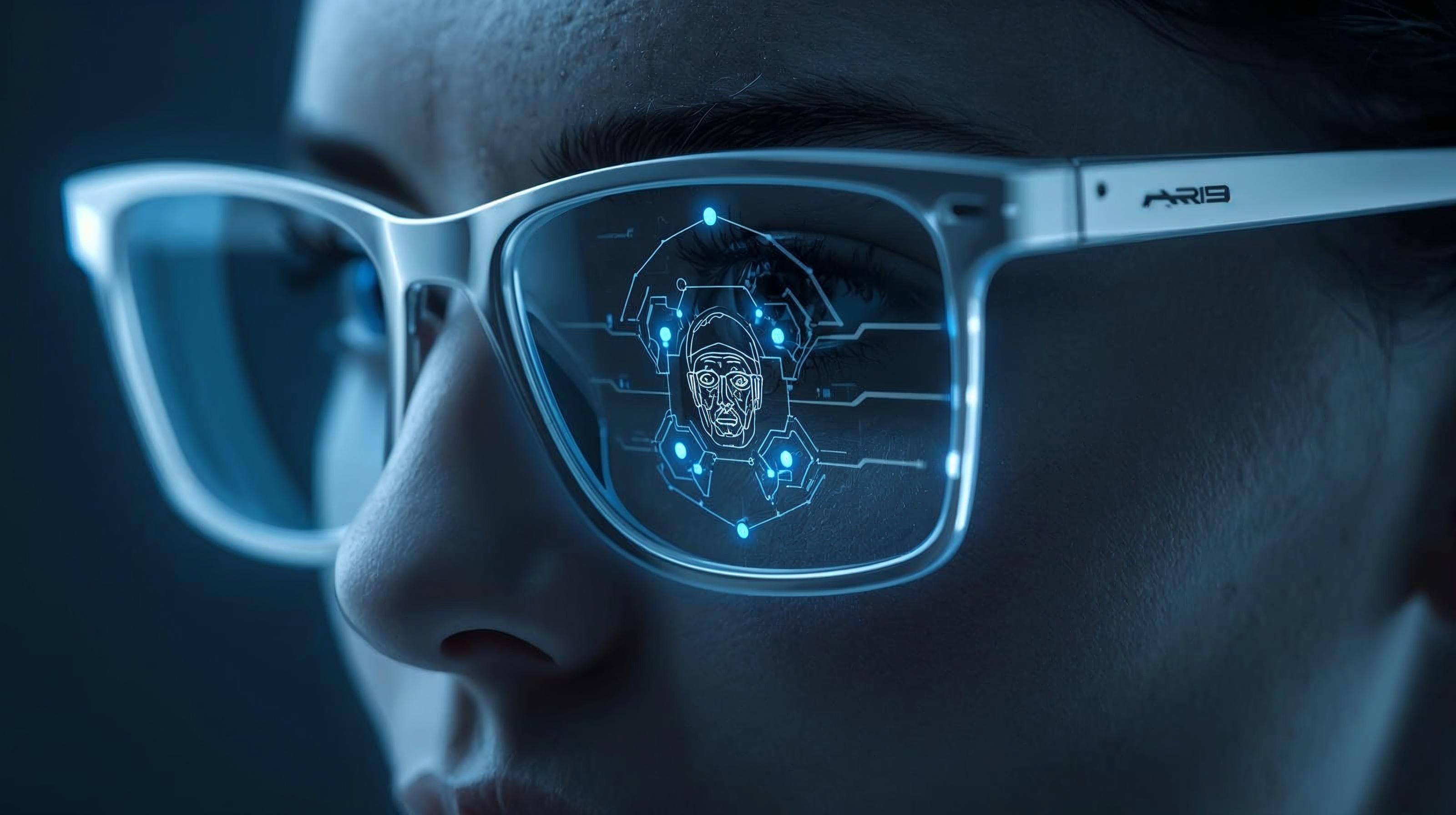

A further section of the article draws on Thales’ 2026 Data Threat Report. The piece says 64% of surveyed organisations in India identified AI-driven transformation as their biggest security risk, while 55% said they had to deal with reputational damage caused by AI-generated misinformation. It also says 65% reported deepfake-driven attacks, while 35% said they had a complete view of their data and 36% said they could fully classify it.
