March 2026 in retrospect

In our March 2026 Monthly newsletter, we observed the deadlock at WTO MC14 over the WTO e-commerce moratorium which led to its lapse, even as a coalition advanced a plurilateral digital trade deal. We examined what it means and what comes next.

Two recent US jury verdicts found Meta and YouTube liable for harms to minors, including exposure to sexual content and social media addiction. Taken together, the cases move beyond questions of content moderation and into the design of the platforms themselves.

The long-awaited Global Mechanism has finally launched, creating the UN’s first permanent forum on ICT security since 1998—but its inaugural session left many questions about how it will actually work. Here’s why it matters and what to watch as the Mechanism takes shape.

Will we one day buy intelligence on a meter? It is not yet certain, but the mere idea raises questions about control, access, and how we measure and consume intelligence in the future.

Plus: March’s top digital policy developments and a Geneva wrap-up.

Snapshot: The developments that made waves in March

Technologies

A new five-year development plan approved by lawmakers in Beijing centres on innovation and advanced technology to drive future economic growth and global leadership, prioritising AI, robotics, aerospace, biotech, and quantum computing while reducing reliance on foreign tech. It also boosts funding, with science spending set to rise by ~10% annually and overall R&D by at least 7%.

The UK government has announced up to £2 billion for quantum technologies, including more than £1 billion over the next four years, alongside a new procurement programme called ProQure to help scale quantum computing in the UK. The funding will support several areas: over £500 million for quantum computing, £125 million for quantum networking, and £205 million for quantum sensing and navigation, plus smaller allocations for research hubs, infrastructure, skills, and commercialisation.

The EU has opened a €180 million funding call to strengthen the resilience of subsea internet cables by supporting backup systems, alternative routes, and redundancy measures. The funding is meant to reduce the risk of outages and external threats to critical undersea infrastructure, reflecting the EU’s growing concern with digital resilience, cybersecurity, and technological sovereignty.

China has approved NEO, a brain–computer interface developed by Neuracle, for use beyond clinical trials to help people with severe paralysis regain hand movement. The implant reads brain signals when users imagine moving their hand and translates them into commands for a robotic glove, with early trial results showing improved ability to perform everyday tasks such as grasping, eating, and drinking.

Security

US President Donald Trump released his administration’s national cybersecurity strategy, outlining priorities across six policy areas: offensive and defensive cyber operations, federal network security, critical infrastructure protection, regulatory reform, emerging technology leadership (including in AI), and workforce development.

Trump also signed an executive order the same day, directing the attorney general to prioritise cybercrime prosecution, tasking agencies with reviewing tools to counter international criminal organisations, and assigning the Department of Homeland Security expanded training responsibilities. The strategy document spans five pages of substantive text, with administration officials describing it as intentionally high-level. The White House stated that more detailed implementation guidance would follow.

Pro-Iranian hacker group Handala claimed responsibility for a cyberattack on US medical device giant Stryker, stating that it was retaliation for a missile strike on an elementary school in Iran. Stryker confirmed the cyberattack in a statement, noting that order processing, manufacturing and shipping were disrupted, but that connected products had not been affected. The FBI subsequently seized four websites tied to Handala and to Iran’s Ministry of Intelligence and Security (MOIS).

Iran’s Revolutionary Guard has threatened to target major US tech companies, including Apple, Google, Meta, Microsoft, Intel, Oracle, Nvidia, Tesla, and Palantir, if more Iranian leaders are killed, accusing the companies of helping identify assassination targets. Iran’s army also claimed to have targeted Israeli communications, telecommunications and industrial centres in response to attacks on Iranian infrastructure.

Long constrained by a defensive security doctrine, Japan will introduce ‘hack-back’ powers from October. The change comes as part of Japan’s ‘Active Cyber Defence’ law, which was passed in 2025 and is being rolled out in stages through 2027.

The EU has imposed sanctions over cyber attacks targeting its member states and partners, listing China-based Integrity Technology Group and Anxun Information Technology, as well as Iran-based Emennet Pasargad, along with Anxun’s co-founders. The sanctions entail an asset freeze and a travel ban for the listed individuals. EU citizens and entities are also prohibited from making funds available to the designated companies.

Authorities in the Netherlands reported that hackers believed to be linked to Russia have launched large-scale phishing operations aimed at the messaging-app accounts of diplomats, military personnel, government officials, and journalists. Instead of breaking the apps’ encryption, attackers trick users into sharing verification codes or linking devices, allowing them to take over accounts and access sensitive conversations.

Portugal’s intelligence service has issued a similar alert, describing a global campaign by foreign state-backed actors seeking access to the messaging accounts of officials and others with privileged information. Once inside an account, attackers can read chats, access shared files, and use the compromised profile to target additional victims through further phishing attempts.

The EU has launched its ProtectEU counterterrorism agenda to strengthen preparedness against evolving threats, with a strong focus on how terrorists use digital tools such as social media, AI, encrypted platforms, crypto-assets, and drones. The plan combines stronger intelligence and Europol support, tougher enforcement of online content under the DSA, protection of public spaces and critical infrastructure, and closer international cooperation.

INTERPOL has launched a new global task force at the Global Fraud Summit 2026 as part of a more coordinated, data-driven response to the rapid global expansion of financial fraud. Jointly developed with the UK’s Home Office and codenamed Operation Shadow Storm, it will target scam centres and their links to cybercrime and human trafficking, using tools such as stop-payment mechanisms and international intelligence-sharing networks. Its initial focus will be dismantling criminal operations across Southeast Asia.

Simultaneously, major technology and consumer-facing companies, including Google, Amazon, Meta, and OpenAI, have signed the ‘Industry Accord Against Online Scams and Fraud’ at the Global Fraud Summit 2026. The companies pledged to focus on deploying proactive security measures and AI-driven detection systems; strengthening information sharing between industry and law enforcement to better identify and respond to fraud; enhancing resilience through advanced defensive technologies and rapid response mechanisms; and improving public education to help individuals recognise and avoid scams.

The EU has been unable to reach an agreement on extending temporary rules that allow online platforms to detect child sexual abuse material, leaving the current framework set to expire in April. The existing rules, in place since 2021, permit technology companies to voluntarily scan their services for harmful content, supporting efforts to identify and remove illegal material. But negotiations between the European Parliament and member states stalled over key issues — especially whether such measures should apply to encrypted services. Attention now shifts to the long-delayed permanent framework (the Child Sexual Abuse Regulation).

Brazil has started enforcing a new law aimed at strengthening protections for children online, marking a significant shift in how digital platforms are regulated in the country. The legislation, known as ECA Digital, introduces obligations such as age verification, stricter content moderation, and mechanisms to remove harmful material involving minors without requiring a court order. The law also targets platform design, requiring companies to limit features that may encourage compulsive use among children, such as excessive notifications, profiling for targeted advertising, and design elements that prolong user engagement. The law allows authorities to impose warnings and fines of up to $10 million for violations. In severe cases, courts may order the suspension or banning of platforms operating in Brazil.

Indonesia’s Communication and Digital Affairs Minister signed a government regulation that means children under 16 can no longer have accounts on high-risk digital platforms. This will reportedly include YouTube, TikTok, Facebook, Instagram, Threads, X, Bigo Live and Roblox. Implementation of the regulation will begin gradually from 28 March.

In Ecuador, the issue is framed in terms of security. A proposed ban on under-15s is linked to concerns that platforms are being used by criminal groups to contact and recruit minors. This shifts the rationale away from well-being and toward crime prevention, positioning social media restrictions as part of a broader security response.

A proposed social media ban for under-16s has been rejected by UK MPs, with 307 voting against and 173 in favour. However, a government-backed pilot is trialling different forms of restriction (full bans, time limits, and curfews) for six weeks. Participants will be interviewed before and after the trial to assess behavioural and practical outcomes, including how easily restrictions can be enforced and whether teenagers attempt to bypass controls.

Australia’s eSafety Commissioner states that platforms have removed or restricted millions of under-16 accounts under the country’s social media age ban, but serious compliance problems remain, including weak age-assurance systems and reporting tools that are hard for parents to use. Investigations into five major platforms are continuing, with enforcement decisions expected by mid-2026.

Austria plans to ban social media use for children under 14, joining a broader international move toward stricter youth online-safety rules. The government says the measure is meant to protect children from addictive platform design, violence, misinformation, and harmful beauty standards, and it also plans to add a new school subject on media and democracy to strengthen digital literacy.

France is considering a new law to ban social media for children under 15, while also proposing a digital curfew for older teens and extending school phone restrictions to high schools. The proposal reflects a broader push for stronger regulation of online harms affecting young people, including cyberbullying, harmful content, and excessive screen exposure, and aligns with similar child-safety measures already seen in countries such as Australia.

A Swiss survey found strong public mistrust of major tech companies such as Google, TikTok, and Meta, with most respondents viewing them as profit-driven, politically influential, and a source of dependence on foreign powers. At the same time, a majority still sees digitalisation as broadly positive, but wants the state to play a stronger role in ensuring that AI, algorithms, and digital platforms do not harm democracy or society.

Australia has begun enforcing new online child-safety rules that require platforms, including social media, app stores, gaming services, search engines, pornography sites, and AI chatbots, to use age-assurance measures and block minors from harmful or explicit content, including sexual and self-harm-related chatbot interactions. The eSafety Commissioner oversees the rules, and companies can face penalties of up to AUD 49.5 million per breach for non-compliance.

European Commission President Ursula von der Leyen convened the first meeting of the Special Panel on child safety online, announced in her 2025 State of the Union address. The panel will provide expert guidance on protecting and empowering children online and explore potential harmonised age limits for social media access. The panel aims to present a report with recommendations to the Commission President by summer 2026.

Economic

The EU and Canada have begun negotiations on a Digital Trade Agreement to expand the digital side of their existing trade relationship, aiming to set clearer rules for cross-border digital commerce. The talks cover issues such as paperless trade, recognition of e-signatures and digital contracts, no customs duties on electronic transmissions, and limits on data-localisation and forced source-code transfer requirements, while still preserving governments’ ability to regulate the digital economy.

The EU and Australia have deepened ties through a new Security and Defence Partnership, the conclusion of free trade agreement negotiations, and the launch of talks on Australia’s accession to Horizon Europe. Together, these moves are meant to expand cooperation on cybersecurity, crisis response, AI and other emerging technologies, data flows, critical raw materials, and trade, signalling a broader strategic alignment beyond economics alone.

Australia is moving toward a national licensing regime for crypto exchanges and tokenisation platforms under its financial services framework, following a Senate committee’s recommendation to pass the Digital Assets Framework Bill 2025. The proposal would bring more of the crypto sector under formal regulation, though industry groups warn that broad definitions could unintentionally capture some infrastructure providers and wallet-related services.

Meta has announced that third-party AI chatbots will once again be allowed to operate through WhatsApp in Europe for a fee, reversing earlier restrictions that limited access to rival chatbot services on the platform. Under the new arrangement, companies will be able to distribute general-purpose AI chatbots via the WhatsApp Business API for 12 months. The change is intended to give European regulators time to complete their investigation while allowing competing AI services to operate within the platform ecosystem.

Google will overhaul its Play Store policies after settling a long-running dispute with Epic Games, creator of Fortnite. The changes include lowering in-app purchase commissions to 20%, adding a 5% fee for developers using Google’s billing system, and reducing subscription fees to 10%, alongside making it easier to install alternative app stores on Android. As part of the deal, Epic will return Fortnite to the Play Store while continuing to develop its own Android app store.

The WTO meeting ended without agreement on extending the e-commerce duty moratorium, while a group of members advanced a separate digital trade arrangement. Read more in our dedicated text.

The ECB has launched Appia, a roadmap for developing Europe’s tokenised financial markets, with Pontes as a DLT-based settlement solution linking tokenised-market infrastructure to the Eurosystem and enabling pilots from Q3 2026. The plan is meant to support the shift from traditional finance to tokenised markets while preserving financial stability, central bank settlement, and interoperability, and it is now open for public consultation.

A joint ILO–World Bank study finds that AI will affect jobs unevenly across 135 economies. Advanced economies face higher exposure, especially in clerical and professional work, while developing countries risk disruption without comparable productivity gains because they often lack the infrastructure, internet access, and skills needed to benefit. The report argues that outcomes will depend less on AI alone than on connectivity, training, job design, and social protections.

Legal

The World Data Organisation (WDO) was launched in Beijing as a new international non-profit platform focused on global data development and governance, with the stated aim of narrowing the global data divide, supporting the digital economy, and improving international cooperation on issues such as cross-border data flows, privacy, and security. The initiative reflects a broader push to make data governance a more structured part of global digital policymaking.

A Luxembourg court has annulled Amazon’s €746 million GDPR fine, not because the alleged privacy violations disappeared, but because it found the regulator’s penalty process was flawed, especially in how Amazon’s level of fault was assessed. The case will now return to Luxembourg’s data protection authority for reassessment.

Italy’s data protection authority has fined Intesa Sanpaolo €31.8 million after an employee repeatedly accessed thousands of customer accounts without authorisation, and the bank failed to detect it in time. Regulators said the case exposed serious weaknesses in internal monitoring, risk controls, confidentiality safeguards, accountability, and breach notification.

Development

Malta has launched the SMART Food project, a Malta–Italy initiative using AI and blockchain to build a digital platform that tracks food products from production to consumption. The aim is to improve traceability, transparency, safety, sustainability, and trust in the agri-food sector, while helping consumers and producers access real-time product information.

China has revised its rules for the 2026 national agricultural census, expanding the census to cover not only agriculture but also rural industrial development and village construction, while introducing new data-collection methods such as remote sensing. The updated rules also tighten data-quality controls, confidentiality obligations, and penalties for falsifying statistics, reflecting a stronger emphasis on both broader rural data collection and stricter state oversight.

The UK and the Philippines have agreed on a new partnership to expand digital education and edtech cooperation, combining UK expertise and investment support with Philippine education priorities. The initiative focuses on improving access to digital learning tools, skills development, and education technology, while strengthening broader bilateral ties in innovation and capacity-building.

Sociocultural

The first transparency reports under the EU’s Digital Services Act-linked Code of Conduct on Disinformation have been published, with signatories including major platforms and civil-society actors outlining measures they say they are taking against disinformation, especially around the war in Ukraine and election integrity. These are the first reports since the Code gained formal recognition under the DSA in February 2025, marking a shift from a mainly voluntary scheme to a more structured co-regulatory system based on commitments, reporting, and auditing.

The EU is reviewing X’s proposal to change its blue-check verification system after finding that paid verification without meaningful identity checks could mislead users under the Digital Services Act. X had been fined €120 million in December and given 60 working days to submit corrective measures, which the Commission is now assessing while the company also challenges the decision in court.

UNESCO has launched a South Africa-focused research initiative on the governance of harmful online content under its Social Media 4 Peace programme, supported by the EU, to study hate speech, disinformation, regulatory gaps, and platform governance. The aim is to produce practical, rights-based recommendations that strengthen digital governance, platform accountability, freedom of expression, and access to information in the local context.

Spain has launched HODIO, a digital tool to measure hate speech across social media. Combining AI, data analysis, and expert review, it will publish biannual reports ranking platforms by users’ exposure to harmful content, aiming to inform policymaking and pressure companies to act. However, critics have raised concerns about the transparency of HODIO and how authorities will define and classify hate speech, warning that poorly defined criteria could infringe on freedom of expression.

AI governance in March

National frameworks, strategies and guidelines

USA. The US government has unveiled a National AI Policy Framework outlining a comprehensive strategy for AI across federal agencies. The policy sets priorities for responsible AI development, data governance, workforce training and international collaboration, while emphasising ethical safeguards, public‑interest outcomes and national security. The framework also calls for accelerated investment in AI research and deployment, alongside coordinated oversight mechanisms to ensure transparency and accountability in federal AI systems.

Egypt. On 14 March 2026, Egypt published the National Guidelines for Trustworthy and Responsible AI. The Guidelines provide a national reference for the responsible development, deployment, and oversight of AI across public and private sectors, ensuring AI use is safe, ethical, and transparent while supporting innovation aligned with Egypt’s Vision 2030 and the National AI Strategy. Complementing the National AI Governance Framework, which defines what should be governed, these Guidelines specify how to comply, offering methodologies, metrics, and checklists to operationalise ethical principles. Targeted at data scientists, compliance officers, and developers, they provide actionable directions to protect individual rights, promote societal well-being, enhance accountability and transparency, and foster innovation grounded in safety. The guidelines also align Egypt with international standards and engage government entities, private enterprises, and community actors in responsible AI governance.

South Korea. South Korea has unveiled a national strategy to become one of the world’s top three AI powers by 2028. The plan combines investment in digital infrastructure, data systems and next-generation connectivity. Authorities aim to expand networks by advancing 5G capabilities and preparing for the commercial deployment of 6G by 2030. Cybersecurity and data integration are also key priorities to support a stronger digital ecosystem. The strategy includes developing talent across education levels and investing in core technologies such as semiconductors and quantum computing. AI adoption is expected to expand across sectors, including manufacturing, healthcare and agriculture.

Sovereignty

The EU. Tensions are emerging in the EU over AI infrastructure investment, with France, Poland, Austria, and Lithuania pushing to reserve part of the €20 billion AI Gigafactory project for European technologies, while Germany is sceptical about linking the project to digital sovereignty goals. Meanwhile, Germany is pursuing a major expansion of domestic data centres and AI processing power, supported by regulatory reforms, tax incentives, and land allocation to attract investment, aiming to reduce reliance on foreign providers.

Russia. The Russian government is proposing rules that could ban or restrict foreign AI tools such as ChatGPT, Claude and Gemini if they fail to store Russian user data domestically and comply with Moscow’s regulatory requirements. The proposals, from the Ministry for Digital Development, aim to extend Russia’s push for a sovereign internet, protecting citizens from ‘covert manipulation’ and enforcing ‘traditional Russian spiritual and moral values.’ Under the draft rules, cross-border AI systems that transmit user data abroad would face restrictions, whereas foreign models that can operate entirely within Russian infrastructure, such as Qwen or DeepSeek, could be deployed safely.

Content policy

The EU. The European Commission has released a second draft of its Code of Practice on marking and labelling AI-generated content, part of efforts to help companies comply with transparency requirements under Article 50 of the EU Artificial Intelligence Act. Section 1 of the code focuses on providers of generative AI systems and proposes a multi-layered approach to marking AI-generated content, including digitally signed metadata, imperceptible watermarking, and optional fingerprinting or logging. Providers are also expected to make detection tools available so users and authorities can verify whether content was generated or manipulated by AI. Section 2 addresses deployers of AI systems, requiring clear disclosure when deepfakes or AI-generated text intended to inform the public have been artificially generated or manipulated, using visible and accessible labels.

The European Council has endorsed proposals to ban AI from generating non-consensual sexual content and child sexual abuse material (CSAM), adjust high-risk AI compliance timelines, and streamline the AI Act, including exemptions for some SMEs, registration requirements, and clarified oversight responsibilities. These moves reflect Europe’s broader effort to secure sovereign AI infrastructure and ensure safe, accountable AI deployment.

Netherlands, France. A Dutch court has ordered xAI and its Grok chatbot not to create or distribute non‑consensual sexual images. The judgement requires Grok’s operators to implement technical measures to block prompts or outputs capable of producing non‑consensual intimate imagery. The decision was framed as a necessary enforcement of personal rights and dignity in the digital age, setting a potentially influential precedent for European courts grappling with AI‑generated harm.

Meanwhile, the Paris prosecutor’s office said that the controversy surrounding sexually explicit deepfakes generated by Grok may have been deliberately amplified. The alleged reason was to artificially boost the value of X and xAI ahead of June 2026, when the new entity created by the merger between SpaceX and xAI is planned to be listed on the stock market.

Security

Australia. The eSafety Commissioner found that AI companion chatbots, including Character.AI, Nomi, Chai and Chub AI, are failing to protect children from harmful content, with weak safeguards against sexually explicit material and child sexual exploitation. Most platforms relied on self-declared age verification, lacked meaningful monitoring of AI inputs and outputs, and did not consistently provide links to crisis or mental health support. Commissioner Julie Inman Grant warned that as children increasingly use AI companions for emotional support, the absence of robust safety measures on self-harm, suicide and unlawful content poses serious risks, with non-compliance subject to civil penalties under Australia’s Age-Restricted Material Codes.

The UK. The Secretary of State for Science, Innovation and Technology has called on online service providers to strengthen measures against digital harms targeting women and girls, as part of a commitment to halve such violence within a decade. The secretary urged tech companies to implement Ofcom’s guidance ‘A Safer Life Online for Women and Girls’, which outlines steps such as conducting risk assessments focused on women and girls, pre-launch abusability evaluations of features, strong default privacy settings, demonetising content promoting abuse, limiting the visibility of misogynistic content in search and recommendation feeds, and implementing rate limits to curb coordinated harassment. The guidance should be implemented by the end of 2026 at the latest.

The USA. The US government is facing two lawsuits from AI firm Anthropic after the Pentagon designated the company a supply-chain risk, effectively barring its technology from defence contracts.

The Department of Justice argues the designation is lawful and grounded in national security, citing Anthropic’s refusal to allow its AI to be used for autonomous weapons and domestic surveillance. Anthropic, in turn, claims the move is unlawful and retaliatory, targeting its policy positions rather than any genuine security risk.

In the California case, a federal judge has temporarily blocked the government from enforcing the designation. The court found that Anthropic’s conduct does not meet the legal threshold under Section 3252, which is limited to covert adversarial threats such as sabotage or system subversion—not public stances or contract disputes. The ruling also highlights procedural failures, including insufficient risk assessment, lack of interagency consultation, and failure to consider less restrictive measures.

The judge further raised constitutional concerns, noting the designation may have been influenced by Anthropic’s speech and that the company was likely denied due process. Evidence of immediate and significant harm—lost contracts, reputational damage, and disrupted business relationships—justified granting a preliminary injunction, though a final ruling may take months.

In parallel, Anthropic is pursuing a second case in Washington, D.C., challenging its supply-chain designation before a three-judge panel at the D.C. Circuit Court of Appeals, specifically contesting the legal authority invoked under the Federal Acquisition Supply Chain Security Act (FASCA).

The legal dispute has drawn support from across the tech sector, with companies including Microsoft, Google, Amazon and OpenAI backing Anthropic’s legal challenge through amicus filings. Industry leaders warn that the government’s designation could set a precedent that destabilises the US AI ecosystem and disrupts suppliers working with both government and private-sector AI systems.

Pay to think: Intelligence on a meter

‘We see a future where intelligence is a utility, like electricity or water, and people buy it from us on a meter and use it for whatever they want to use it for,’ Sam Altman, CEO of OpenAI, recently stated.

On the surface, this could sound like a vision of empowerment: on-demand access to superhuman reasoning, available to anyone with enough money to buy it. But Altman’s metaphor is precise. Utilities are not owned by the public; they are controlled by powerful providers who set the rates, terms, and infrastructure.

Our knowledge is already becoming commodified by tech companies and the advertising industry. But what OpenAI’s CEO suggests is a world in which intelligence itself is outsourced to a handful of platforms.

The AI monopolisation of intelligence challenges one of the pillars of civilisation built over millennia: that knowledge defines what it means to be human.

Altman is therefore not just describing a business model; he is also outlining a new social order, one in which intelligence is centralised, privatised, and sold back to humanity by major AI companies.

Not an inevitable future. The battle for human intelligence and knowledge – for who owns the capacity to think, to know, to decide – is not yet over.

The real alternative to having our knowledge monopolised and metered back to us is not the absence of AI; it is AI as an extension of our personal knowledge, shared with communities, countries, and humanity according to our preferences.

Communities, universities, companies, and countries can build bottom-up AI rooted in their own languages, values, and knowledge systems. Open-source models have made human-centred AI technically possible and financially affordable. This would lead to a distributed ecosystem in which AI strengthens human communities rather than subordinates them.

This text is an adaptation of Dr Jovan Kurbalija’s blogpost ‘The war we’re not watching: The fight for the future of human knowledge.’

From content to design: Juries signal new era of accountability for tech giants

Two recent US jury verdicts are beginning to redraw the boundaries of responsibility for social media platforms, with implications that extend well beyond the individual cases.

In New Mexico, a jury ordered Meta to pay $375 million after finding it misled users about the safety of its platforms for children. The lawsuit, brought by Attorney General Raul Torrez, accused Meta of violating the state’s consumer protection laws by misrepresenting how safe its platforms are for minors while building features and algorithms that, in prosecutors’ view, entice prolonged use and expose children to significant risks. Those risks include addiction-like engagement, exposure to harmful sexual content, unwanted private communications with adults, sleep disruption from compulsive use, and environments where predators can operate with relative ease. Jurors were presented with internal research and testimony from former employees, including whistle-blower Arturo Béjar, suggesting the company was aware of these risks but failed to adequately warn the public or mitigate harm. Meta has rejected the verdict and plans to appeal.

Simultaneously, a Los Angeles jury reached a related conclusion in a different context. It found Meta and YouTube—owned by Google—negligent in the design and operation of their platforms in a case focused on social media addiction. The lawsuit, brought by a young woman identified as K.G.M., argued that compulsive use of these platforms during her teenage years contributed to depression, anxiety, and body dysmorphia. The jury agreed, awarding $6 million in damages and assigning 70% of the liability to Meta and 30% to Google. Both companies have said they will appeal, maintaining that mental health outcomes cannot be attributed to a single platform.

Why does it matter? The financial penalties in these cases are small for companies of this scale. The broader significance of the verdicts lies elsewhere.

Historically, platforms have relied on legal protections—most notably Section 230 of the US Communications Act—to shield themselves from liability for user-generated content. These rulings, however, begin to test a different theory: that liability can arise not just from what users post, but from how platforms structure, recommend, and amplify content.

This distinction matters because it targets the core of the modern social media business model. Platforms like Meta and Google are built around maximising user engagement—time spent, interactions, and content consumption—which in turn drives advertising revenue. To achieve this, they rely on recommendation systems, frictionless interfaces, and behavioural design features such as autoplay, infinite scroll, and push notifications. These are not incidental elements; they are foundational to how platforms retain users and monetise attention.

The emerging legal argument is that some of these design choices may actively contribute to harm, particularly for minors. In the New Mexico case, the focus was on exposure to harmful and exploitative content. In Los Angeles, the emphasis was on compulsive use and its mental health effects. But both cases converge on a similar point: that platform architecture itself, not just isolated content failures, can create foreseeable risks.

If this reasoning gains traction in courts, it introduces a new kind of pressure on technology companies. The issue is not the size of any single fine, but the cumulative effect of thousands of similar lawsuits, rising compliance costs, and the possibility of precedent-setting rulings that reshape acceptable design practices. Engagement-maximising systems, long treated as a competitive advantage, could become a source of legal vulnerability.

That creates a structural tension. Reducing harmful outcomes may require dialling back precisely those features that make platforms so effective at capturing attention. Even modest declines in engagement, when applied across billions of users, can translate into significant revenue impacts.

The path forward. Companies are unlikely to abandon their core models outright. A more probable response is adaptation. This could include re-optimising algorithms toward safer forms of engagement, segmenting products by age with stricter defaults for minors, and investing in more robust safety and audit mechanisms. There may also be a gradual shift toward alternative revenue streams—such as subscriptions, creator monetisation, or commerce integrations—to reduce reliance on pure attention-based advertising.

Legal strategy will also play a role. Both Meta and Google are appealing these verdicts, and future rulings will determine how far courts are willing to go in attributing harm to design choices. Companies are likely to strengthen disclosures, expand parental controls, and document internal risk assessments to demonstrate due diligence. Such measures may not eliminate liability, but they can shape how responsibility is interpreted.

Ultimately, the key question is whether these cases represent isolated outcomes or the beginning of a broader legal shift.

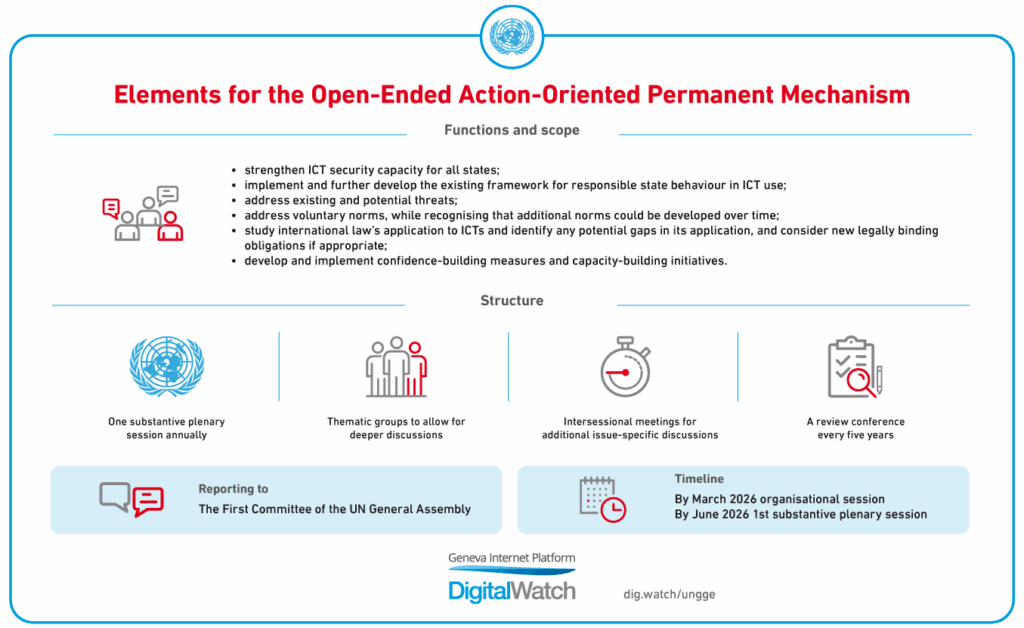

UN kicks off Global Mechanism on ICT security, road ahead murky

After almost three decades of stop-start cybersecurity negotiations at the UN, the long-anticipated Global Mechanism on ICT security has finally kicked off.

It is the first permanent forum of its kind since discussions on ICT security began back in 1998, and its mere existence says a lot about how far those talks have come.

But if the launch felt like a breakthrough, the organisational session quickly brought things back down to earth. Beyond what was already sketched out in Annex C and the OEWG’s Final Report, it remained unclear how the Mechanism would actually organise itself in practice.

The session raised plenty of questions—about structure, priorities, and process—but offered few real answers, leaving the sense that while the Mechanism now exists, what it will do and how it will do it is still very much up for grabs.

A new body, a new mandate, and a newly elected Chair, Egriselda López of El Salvador, injected renewed optimism into the Global Mechanism’s first organisational session. Yet, within minutes, it became evident that the Global Mechanism did not start with a blank slate, but rather inherited the OEWG’s long list of disagreements.

Russia opened the discussion by disputing the legitimacy of the Chair nomination, which it claimed was guided solely by the UNODA and thus limited state participation in the process. It used this opportunity to stress that all decisions under the new process must be based on consensus and be completely intergovernmental.

The substantive issues on the agenda

For the provisional agenda of the mechanism’s July session, the Chair circulated a draft agenda organised around the five pillars of the framework for responsible state behaviour in the use of ICTs. However, Iran and Russia argued that the wording of agenda item 5 did not precisely reflect paragraph 9 of Annex C of the OEWG final report and called for correction at this session. The EU and Canada rejected this, arguing the draft already referenced all relevant documents and that isolating one paragraph would itself constitute renegotiation. The USA reserved its position entirely, preferring that the July plenary adopt its own agenda. No consensus was reached, and the Chair will continue consultations before July.

The mechanism inherited many unresolved substantive debates from its predecessors.

On international law, there is widespread agreement that considerable work remains to be done, but little agreement on how to carry it out. The majority of delegations have shown clear support for strengthening the existing normative framework and reaffirming the UN Charter’s application to cyberspace.

A broad majority of states expressed support for ensuring that the mechanism remains action-oriented, with a strong focus on practicality and the implementation of agreed frameworks on international law, norms, CBMs, and capacity-building (Chile, Nauru, Portugal, Switzerland, the United Kingdom, Estonia, Italy, Australia, the Democratic Republic of the Congo, Antigua and Barbuda, Sudan, Vanuatu, Albania, Vietnam, India, Greece, Rwanda, the Dominican Republic, North Macedonia, Kiribati).

In particular, some delegations advocated for applying the framework to concrete scenarios as a way to stimulate implementation (Japan, the Netherlands, the United Kingdom, Sudan). China was the only delegation to emphasise that further development of the framework is equally important alongside its implementation.

The EU highlighted the norm checklist, a hotly debated issue in the previous mechanism, as an area for further improvement.

However, for many states, a fundamental concern remains. Capacity-building initiatives risk stalling without reliable funding, so many delegations, primarily from developing countries, urged the Global Mechanism to prioritise the operationalisation of the UN Voluntary Fund, which was tabled but left unresolved by the OEWG.

Dedicated thematic groups: Who, what and how

The often broad agenda and long-winded statements of delegations in OEWG plenary sessions left little room for technical depth, leaving many delegations frustrated with the gap between consensus language and concrete action.

The Dedicated Thematic Groups (DTGs) were created to address precisely this issue by setting up an informal, technical forum to advance practical initiatives already agreed on, such as the Global ICT Security Cooperation and Capacity Building Portal. However, the practicalities of how they should be set up and administered are likely to be hotly contested, as they will influence what gets on the agenda, who drives it, and whether this new system is capable of delivering real outcomes over time.

Who will lead DTGs?

The dominant and most contested question of the session was who would appoint the co-facilitators for the two Dedicated Thematic Groups. The Chair proposed appointing two co-facilitators per DTG: one from a developed country, one from a developing country, drawing on GA practice, under which the Chair appoints co-facilitators for intergovernmental processes. She indicated her intention to hold broad informal consultations before making appointments, and committed to geographic balance, gender parity where practicable, and relevant technical expertise as selection criteria.

Who ends up in these roles matters considerably: the co-facilitators will steer the DTG discussions, shape their agendas, and channel recommendations to the plenary.

A broad coalition of states supported the Chair’s approach, including the EU, speaking on behalf of its member states and several aligned countries such as France, Germany, Australia, the United Kingdom, the Netherlands, Switzerland, Japan, Egypt, Senegal, Nigeria, Malaysia, Moldova, and others. Egypt and Senegal were among the most direct, noting that delays in operationalising the mechanism would waste the intersessional period and erode its credibility, particularly for developing countries eager to move from procedure to substance.

Another group of states, led by Russia and supported by Iran, China, Belarus, Nicaragua, and Cuba, argued that co-facilitator appointments must be approved by member states by consensus rather than made unilaterally by the Chair. Russia contended that DTG co-facilitators handle substantive political matters and therefore constitute officials whose appointment requires a collective agreement. Russia also raised a geographic argument: assigning one developed-country and one developing-country co-facilitator per DTG still disproportionately favours developed states, which represent less than one-fifth of UN membership. Iran added that the early OEWG draft text had explicitly authorised the Chair to appoint DTG facilitators, but that this provision was deliberately removed during negotiations, signalling a lack of agreement on the matter.

The Chair affirmed her intention to consult all member states informally before presenting candidates and called on delegations to show flexibility given the urgency of getting the mechanism’s work underway. Russia subsequently stated its understanding that candidates would be determined through broad consultation, followed by consensus-based approval, but the Chair neither confirmed nor rejected this interpretation.

The question is effectively deferred to the intersessional period, meaning the composition of the DTG leadership teams remains unresolved and will require continued diplomatic engagement before July.

What will DTGs discuss?

A closely related debate concerned who decides what the DTGs will actually discuss. Several Western and like-minded delegations (e.g., Germany, France, Canada, the United Kingdom, and Australia) maintained that this is a prerogative of the Chair and co-facilitators, to be exercised in close consultation with states. These delegations proposed ransomware and critical infrastructure protection as natural starting points, citing their frequency across national statements and OEWG discussions.

Iran and Russia emphasised that topics must be determined by consensus among all member states. Argentina argued that the plenary should maintain control over the agenda rather than ceding too much responsibility to the co-facilitators.

Morocco instead advocated a bottom-up model in which DTGs define their own priority subtopics from the start, based on member states’ expressed preferences to maintain regional balance and ownership.

In this sense, the DTGs’ credibility hinges on a delicate balance: they must be ambitious enough to move conversations into action, yet focused enough on issues with broad support that their outputs survive in plenary.

No decision was taken. For industry and civil society organisations with specific thematic priorities, this remains an active opening: states are currently receptive to input on which topics the DTGs should prioritise.

Colombia put forward a process proposal that drew broadly positive reactions across delegations. It recommended that:

- DTG mandates be time-limited with clearly defined and measurable outputs;

- DTG 1 address specific rotating subjects rather than its entire mandate simultaneously; and

- DTG outputs systematically distinguish between recommendations on which consensus exists and those still under development.

Senegal made a complementary point: reports should document both areas of agreement and divergence, preserving a record of discussions even when no consensus was reached. Both proposals reflect a wider concern that, without structured outputs and clear timelines, the mechanism risks reproducing the open-ended deliberation of the OEWG without generating implementable results.

How will DTGs feed into the plenary?

Another issue discussed was how DTG work feeds into plenary work. Brazil made it clear that without a defined protocol for elevating DTG reports to the plenary and formally accepting their recommendations, the groups risk becoming talking shops that are disconnected from the mechanism’s official conclusions. Its proposed solution, which has yet to gain support, is to keep DTG conversations primarily informal but include a short formal section for decision-making.

Stakeholder participation

A long-standing point of contention, and possibly the most politically charged, was the role of non-governmental actors in the groups. The effective participation of interested stakeholders remains uncertain.

Some delegations adopted a more accommodating stance, recognising that stakeholders can enhance the quality of deliberations (Sudan, Antigua and Barbuda) and contribute to more practical outcomes (Vietnam, Dominican Republic), while underscoring the importance of preserving the intergovernmental nature of the process (Sudan, Vietnam).

Canada and like-minded states argued that the July 2025 consensus clearly provides for states to nominate experts for DTG briefings and for the wider stakeholder community to participate throughout DTG discussions.

Iran contested this, asserting that stakeholder modalities agreed for the mechanism apply equally to DTGs. Russia also argued that expert briefings from external stakeholders are a possibility rather than a standard feature, and that inviting external briefers requires member-state agreement on a case-by-case basis.

How this is resolved will directly determine the degree of access the private sector, technical community, and civil society organisations have to the DTG process in practice.

What’s next?

The session closed without resolution on its two most consequential questions: co-facilitator appointments and the provisional plenary agenda. The Chair will convene informal intersessional consultations on both and issue a programme of work document before July in all UN languages.

The Secretariat will open an annual stakeholder accreditation window in the coming weeks; stakeholders wishing to participate in plenary sessions and review conferences can monitor the Digital Watch Observatory web page, where we track the process, for details.

The broader tension remains unresolved, and how it is managed in the intersessional period will largely determine whether the July plenary can open with the mechanism’s operational foundations in place.

The Chair also confirmed the two key dates for 2026:

- the substantive plenary session scheduled for 20-24 July, and

- the DTG meetings scheduled for 7-11 December, both at UN Headquarters in New York.

For stakeholders tracking or seeking to contribute to these discussions, these are the dates to plan around.

Deadlock at WTO: Moratorium lapse meets plurilateral momentum

At the 14th Ministerial Conference of the World Trade Organization (MC14) in Yaoundé, Cameroon, digital trade dominated the agenda through two parallel tracks—each pointing in a different direction and illustrating both the limits and evolution of the multilateral system.

The moratorium on customs duties on electronic transmissions. The long-standing moratorium, renewed every two years since 1998, expired on 31 March after members failed to reach consensus on the length of a new extension.

While some members, particularly the USA, sought a longer-term solution, others have traditionally advocated a shorter renewal period, reflecting a desire for caution given the rapid pace of technological change and the need to preserve policy flexibility for the future.

During MC14, Brazil was the leading voice, emphasising the importance of caution in light of developments such as AI and 3D printing, suggesting that a shorter extension with room for review would allow members to reassess as the digital landscape evolves. Efforts to find a middle ground ultimately fell short as time ran out.

The outcome also meant that a broader set of discussions on WTO reform, which had been politically linked to the approval of the moratorium, remained unresolved.

This is not the first time the moratorium has lapsed; it also happened at the 1999 Seattle ministerial, before the moratorium was reinstated at Doha two years later. The current expiry of the moratorium does not mean tariffs will automatically be imposed.

Still, it creates policy space for some countries to consider introducing tariffs if they are not bound by trade agreements that prohibit customs duties on electronic transmissions.

Plurilateral Agreement on E-commerce. In parallel, however, a different dynamic unfolded. A coalition of 66 WTO members announced they would move forward with implementing the plurilateral Agreement on Electronic Commerce concluded in 2024 by the Joint Statement Initiative on e-commerce (JSI), through interim arrangements.

Reminder: WTO Joint Statement Initiatives (JSIs) are a way for a group of World Trade Organization members to move forward on specific issues without waiting for the entire organisation to reach a consensus. They are open to any WTO Member.

Australia, Japan, and Singapore, serving as co-convenors of the JSI on e-commerce, confirmed that the pact, which aims to facilitate digital trade and prohibit duties on e-commerce transactions, will enter into force once 45 members have formally notified their acceptance.

What’s next for e-commerce discussions? Discussions on the moratorium, WTO reform, and the future of the Work Programme on e-commerce (WPEC) are expected to continue at the next General Council meeting in May in Geneva.

In the meantime, JSI members will continue to seek inclusion of the Agreement under the WTO legal architecture.

The JSIs and their outcomes face opposition from a number of WTO members. The JSIs themselves, these countries argue, lack legal status because they were not launched by consensus. Similarly, these countries claim that the outcomes of JSIs are not based on consensus and are neither multilateral agreements nor plurilateral agreements as defined in Article II of the agreement that established the WTO – the Marrakesh Agreement.

For instance, India registered dissent against the incorporation of the agreement achieved within another plurilateral negotiation, on Investment Facilitation for Development, into the WTO rulebook.

The country argued that incorporating such frameworks into the WTO rulebook risks eroding the organisation’s foundational principles. It asked for a discussion of guardrails and legal safeguards before integrating any specific plurilateral outcome into the WTO.

Last month in Geneva

The Data Technology Seminar 2026, organised by the European Broadcasting Union, took place from 10 to 12 March in Geneva. The event brought together media professionals and technology experts to discuss how AI and data systems are being developed, governed, and deployed in public service media. Sessions explored topics such as AI strategy and governance, metadata platforms, hybrid search, audience personalisation, and the use of generative AI in editorial and production workflows.

The World Intellectual Property Organization (WIPO) launched the AI Infrastructure Interchange (AIII) on 17 March in Geneva and online. The programme included keynote remarks, panel discussions, and presentations addressing the role of technical collaboration between creators, rightsholders, and technology companies. Participants also discussed the objectives of the AIII initiative and the establishment of a Technical Exchange Network intended to support ongoing expert dialogue on practical challenges and opportunities.

The Geneva Graduate Institute organised a briefing lunch on 23 March to examine evolving transatlantic dynamics at the intersection of US politics and the global influence of major technology platforms. The discussion explored how recent political developments in the USA and the concentration of technological power shape Europe’s position, including questions of dependency, regulation, and strategic autonomy.

The International Labour Organization (ILO) hosted a session on the macroeconomic impacts of AI on 25 March, showcasing a new World Bank Group model that treats AI as a structural transformation of production. The tool simulates how AI adoption affects sectors, occupations, and prices, helping policymakers assess implications for growth, equity, and structural change. A first case study in Poland will explore its application, with potential use in other emerging and middle-income economies.

On 30 and 31 March, the International Telecommunication Union (ITU) held a two-day workshop on ‘Trustable and Interoperable Digital Identities for Human and Agentic AI’ in Geneva. It brought together stakeholders from governments, industry, academia, and standards bodies to examine technical approaches related to trust frameworks, trust management, security, and interoperability, and to identify actionable recommendations and consolidated insights to advance standardisation work in the field.

The Inter-Parliamentary Union (IPU) hosted a webinar on ‘Building AI Literacy in Parliaments’ on Wednesday, 1 April 2026, to explore how parliaments can develop training and resources to support AI literacy among members, parliamentary staff, and IT teams. The webinar highlighted the IPU Guidelines for AI in parliaments, emphasising that AI literacy should reach all roles within parliaments.