Dear all,

It’s back to AI regulation today – from a US executive order in sight to new AI voluntary rules and industry pleas for regulation. In other news, net neutrality is making a comeback in the USA, with the communications commission’s proposal to restore the 2015 rules that were repealed in 2017. Amazon has been sued for antitrust violations in the USA, but managed to obtain a temporary suspension of the EU’s Digital Services Act obligations (we’ll report on this once the court delivers its judgement).

Let’s get started.

Stephanie and the Digital Watch team

// HIGHLIGHT //

Biden confirms AI executive order is imminent

US President Joe Biden’s executive order on AI will be issued in the coming weeks, he confirmed during a meeting of the President’s Council of Advisors on Science and Technology in San Francisco last week.

The first time we heard about this was in July when Biden spoke of plans for new rules right after a meeting he had just held with AI companies. At the time, the president spoke of an executive order coming in summer, which was obviously delayed. It’s coming this autumn, he now said. But more than that, last week’s remarks offer new clues on what to expect.

Leveraging AI’s potential. Biden, a self-proclaimed AI enthusiast, said it would be a major failure if future generations looked back at our time and thought that we had the potential tools to explore and significantly increase our ability to help, and ‘we somehow messed it up’. One of the main focuses, therefore, is on harnessing the potential of AI for diverse areas such as research, healthcare, science, education, and more.

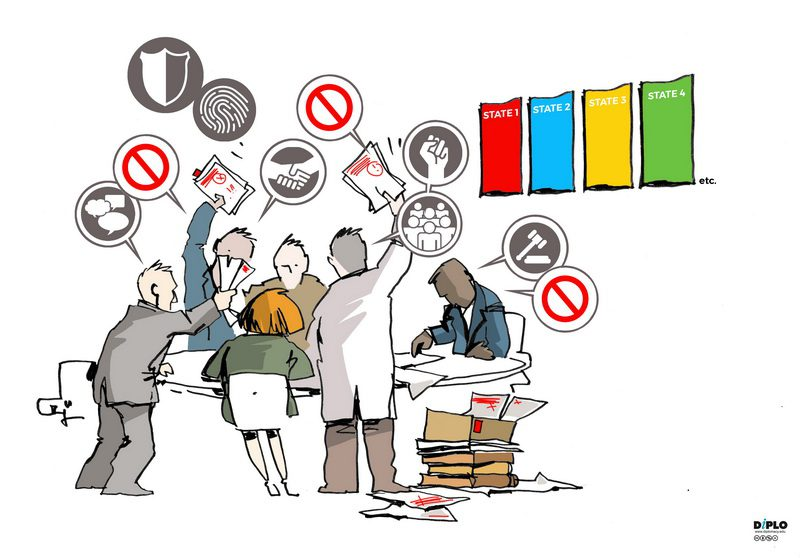

Protecting people from profound risk. Without clear rules of the road, Biden is wary that AI innovation could create serious dangers, hence the executive order’s strong emphasis on risk. But the fact that the leading 10 to 12 companies are developing AI tools with vast differences in their potential and risks creates a significant challenge for legislators: Which measures can tackle threats across the board in a way that doesn’t water down any regulatory action?

A good starting point. The AI Bill of Rights and other voluntary commitments already focus on safety, security, and trust, so the executive order will most likely build on them. In his address, Biden specifically referenced three voluntary measures companies are following, making it likely that these would be repeated in his executive action. The first would oblige companies to make sure AI is watertight before being released to the public. The second is the need for independent product testing: The White House has already supported red-teaming at DefCon, confirming this preference. The third is watermarking, which would address the ubiquitous issue of disinformation.

The path to bipartisan legislation. Biden also said his administration will ‘continue to work with bipartisan legislation’. Though the intention is there, a serious challenge here is time: Legislators are still working on fundamental questions, and the most we can expect is some narrow pieces of an AI regulatory regime in the current session of Congress.

A close ally: The UK. On the international front, Biden said the USA would work with international partners, singling out the UK, probably because it’s one of the few countries that is friendly to US-based AI companies. The UK’s upcoming AI Summit in November will focus primarily on managing the risks of frontier AI, that is, ‘highly capable general-purpose AI models that can perform a wide variety of tasks and match or exceed the capabilities present in today’s most advanced models’, according to the UK government’s pre-summit description released last week. If that sounds familiar, that’s precisely what OpenAI’s Sam Altman told US lawmakers recently.

A shift in focus. With AI companies calling for guardrails, legislators offering bipartisan support, and an executive order in sight, the USA is clearly shifting away from its traditional laissez-faire approach at home. The main question is, to what extent?

| Digital policy roundup (25 September–2 October) |

// AI GOVERNANCE //

UNGA78: Concerns over AI risks and use of lethal autonomous systems

Our coverage of the first week of the UN General Assembly’s general debate showed clearly that countries were worried about AI risks. What emerged during the second week was along the same lines: There’s an urgent need for global cooperation on how to navigate AI challenges.

A particularly worrying issue raised on the last day was the use of lethal autonomous weapons systems (LAWS) in armed conflict. A few countries said that until an international legal framework is put in place, LAWS should be banned.

Why is it relevant? International negotiations have been going on for years without much progress. Different countries have varying positions on LAWS: Some advocate for a complete ban, while others argue for strict regulations and safeguards. With AI risks on world leaders’ minds, there might be a better chance for the debate to move forward and reach a compromise.

New proposals. In parallel, the USA plans to propose international norms for the responsible military use of AI at the UN’s First Committee meeting in October. Costa Rica also said it was working on proposing a joint resolution with Austria and Mexico on autonomous weapons systems.

More resources. Read who said what at UNGA 78, and what they said about AI.

Canada launches voluntary AI code of conduct

Canada launched a new voluntary code of conduct for companies developing generative AI. The code includes measures for accountability, safety, fairness and equity, transparency, human oversight and monitoring, and validity and robustness. Signatories also commit to supporting the development of a responsible AI ecosystem in Canada and using AI to drive inclusive and sustainable growth while prioritising human rights, accessibility, and environmental sustainability.

Even though the code is voluntary and has been signed by OpenText, BlackBerry, and TELUS, among others, it has received some sharp criticism from Shopify CEO Tobi Lütke, who argued that Canada needs to focus on encouraging more innovation, not on regulating the sector.

Why is it relevant? Canada is following the recent trend of launching voluntary guidelines until legislation (in Canada’s case, the Artificial Intelligence and Data Act (AIDA), part of Bill C-27) is enacted.


// NET NEUTRALITY //

US FCC proposes restoration of net neutrality rules

The US Federal Communications Commission (FCC) is planning to restore the net neutrality rules that it introduced in 2015 (and which were repealed in 2017 under the previous FCC administration).

The announcement was made last week by FCC Chairperson Jessica Rosenworcel, who said the FCC proposes to reclassify broadband under the so-called Title II of the US Communications Act. This would also reinstate the FCC’s authority to serve as a watchdog over the communications marketplace.

Why is it relevant? Net neutrality rules, which prevent ISPs from restricting or throttling internet access, have long been a major bone of contention. The 2015 Open Internet Order was a win for net neutrality proponents, but it didn’t last long. With a new majority of Democrat-appointed members on the commission, the current administration hopes to reinstate the original rules. The FCC will meet on 18 October: A vote in favour of this plan will kickstart the rulemaking process.

// ANTITRUST //

FTC sues Amazon over alleged anti-competitive practices

The US Federal Trade Commission (FTC) and 17 state attorneys general sued Amazon for alleged anti-competitive behaviour.

The claims. The FTC and the states say that Amazon abuses its monopoly (in the online superstore market, and the online marketplace services market) using tactics such as forcing sellers to use Amazon’s logistics services, often at inflated prices, and anti-discounting measures that punish sellers and deter other online retailers from offering prices lower than Amazon, keeping prices higher for products across the internet.

‘Amazon is now exploiting its monopoly power to enrich itself while raising prices and degrading service for the tens of millions of American families who shop on its platform and the hundreds of thousands of businesses that rely on Amazon to reach them’, said FTC chairperson Lina Khan.

Why is it relevant? First, it’s a sweeping lawsuit that goes beyond what Amazon faces in other cases. Second, judging by the FTC’s track record (it recently failed to block Microsoft’s acquisition of Activision, though to be fair, the FTC hasn’t given up), there’s a chance that Amazon could emerge largely unscathed. What’s more, Amazon does have a compelling argument: It offers customers a huge selection of products and gives retailers access to a global market.

Case details: Federal Trade Commission et al v. Amazon.com Inc, US District Court for the Western District of Washington, 2:23-cv-01495

EU launches preliminary investigation into Nvidia’s possible AI chip market abuse

The European Commission has been informally collecting views on potentially abusive practices by Nvidia, which produces chips used for AI and gaming, Bloomberg revealed. This comes after France’s competition authority carried out an ‘unannounced inspection […] in the graphics cards sector’, which was revealed to involve Nvidia.

Nvidia, a major player in the sector for graphics processing units (GPUs), is the only trillion-dollar semiconductor firm in the world.

Why is it relevant? This marks the first antitrust probe linked to hardware services used for AI. But it’s still premature to say what will happen next. The aim of early-stage investigations is for the European Commission to understand if it needs to intervene with more formal procedures, so there’s no certainty whether this will escalate.

| The week ahead (2–9 October) |

Ongoing till 31 October: The European Commission, together with other EU institutions, kicked off the annual European Cybersecurity Month campaign, aimed at raising cyber awareness amid increasing concern about online safety.

8–12 October: The annual Internet Governance Forum (IGF2023) takes place in Kyoto, Japan, and online, starting next Sunday. As usual, we’ll be on the ground and actively engaged with reporting, analysis, and workshops. Sign up for daily newsletters and download our app to stay up-to-date with session reports and other updates.

| #ReadingCorner |

Tech Policy Press has created an online tracker for updates on Senator Chuck Schumer’s ongoing AI Insight Forum series of events, including who’s attending and what topics were discussed.

Originality.ai, a site that provides detection services for AI-generated content and plagiarism, has another online tracker: It lists the ongoing copyright and trademark lawsuits against OpenAI and ChatGPT.

The 6th session of the Ad Hoc Committee on Cybercrime left many questions unanswered. Two of the most critical issues of contention, related to terminology and the proposed convention’s scope, remained unresolved. With only one session to go – in February 2024 – the pressure’s on. Read our key takeaways from the penultimate session.