10 – 17 April 2026

HIGHLIGHT OF THE WEEK

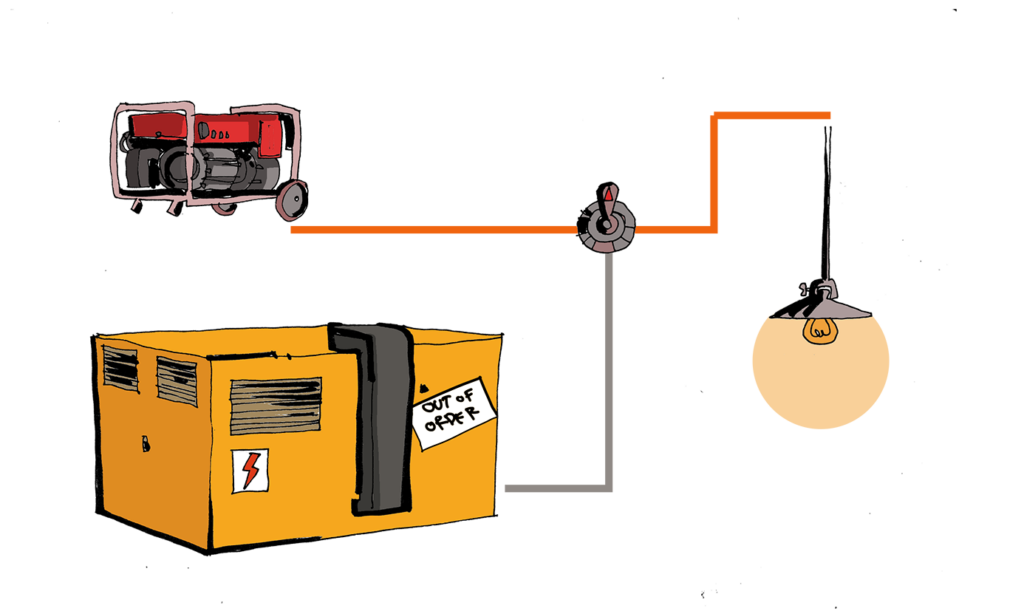

European firms build a digital ‘backup generator’

A group of European firms has unveiled what it describes as a digital ‘backup generator’: a full-stack recovery system designed to keep critical services running if access to foreign technology providers is disrupted.

Developed by Cubbit, Elemento, SUSE, and StorPool Storage, the so-called ‘Disaster Recovery Pack’ was launched on 15 April in Berlin at the European Data Summit hosted by the Konrad-Adenauer-Foundation.

The Pack bundles together storage, compute, orchestration, networking, identity, observability, and management into a pre-integrated, deployable system. Organisations can use the system to identify critical services, build a sovereign recovery setup, and shift key operations to a fully European stack in the event of disruption.

The aim is not to replace existing infrastructure outright, but to give organisations a ready-to-activate fallback environment—one that can be tested in advance and scaled progressively across workloads.

While the notion of a foreign vendor ‘kill switch’ remains contested, the underlying concern—loss of access to critical services due to external legal, political, or commercial decisions—has gained traction across European policy circles.

That concern is reinforced by market structure. US firms, including Google, Amazon Web Services, and Microsoft, continue to dominate Europe’s cloud ecosystem, while payment systems and software layers remain similarly concentrated.

In this context, resilience is increasingly framed not as full technological independence, but as the ability to withstand disruption without systemic failure.

Why does it matter? Initiatives like the Disaster Recovery Pack could form the backbone of a more resilient European digital ecosystem—one designed not to eliminate dependencies, but to manage them on Europe’s own terms.

IN OTHER NEWS LAST WEEK

This week in AI governance

South Africa. South Africa has unveiled a draft national AI policy proposing new institutions — including a National AI Commission, an AI Ethics Board and a regulatory authority — alongside incentives such as tax breaks and grants to boost local innovation. The plan aims to position the country as a continental AI leader while addressing governance, infrastructure and data sovereignty concerns.

Russia. Russia is advancing a draft AI regulatory framework that would formalise oversight of AI development and deployment, aligning with broader efforts to strengthen digital sovereignty and state control over emerging technologies. The proposals focus on risk management, national standards and reducing dependence on foreign AI systems, while supporting domestic innovation. The move fits into Moscow’s wider strategy of tightening control over digital infrastructure and cross-border data flows.

UNESCO — Latin America & Caribbean. UNESCO has launched a regional AI in Education Observatory for Latin America and the Caribbean, designed to support evidence-based policymaking and track the impact of AI on education systems. The initiative aims to build capacity, share best practices and guide responsible integration of AI tools in schools and learning environments.

Belgium. Belgium’s data protection authority has released a new information brochure titled ‘The Impact of Artificial Intelligence (AI) on Privacy’, providing guidance on risks such as bias, privacy violations and misuse of generative AI systems. The document is intended to raise awareness among organisations and the public, and to support compliance with EU data protection and AI governance frameworks.

Kazakhstan. Kazakhstan has introduced mandatory audits for high-risk AI systems, requiring developers to obtain a positive audit assessment before their systems can be listed as ‘trusted’ by sectoral authorities. The government will publish and regularly update official lists of approved systems, based on applications that include documentation on ownership, functionality and use conditions, reviewed within strict timelines. The move aims to build trust and standardise best practices in AI deployment, signalling a more structured and compliance-driven approach to high-risk AI governance.

Ghana. The Ghanaian Ministry of Communication, Digital Technology and Innovations has launched a public-sector AI capacity development programme in collaboration with the Government of Japan and the United Nations Development Programme. The programme is designed to equip public officials with knowledge of AI and its applications in governance. It focuses on improving decision-making and service delivery, drawing on experience from the UN and Japan.

EU develops age verification app

The European Commission has developed a standardised age-verification app intended to work across member states. The app allows users to confirm they meet age requirements to access social media platforms by providing their passport or ID number. It is designed to integrate into national digital wallets or operate as a standalone app, with a coordinated EU framework to ensure interoperability and avoid fragmented national systems.

The app is open source and available for both public and private implementation, but is subject to common technical and privacy requirements. The Commission plans to establish an EU-level coordination mechanism to oversee rollout, accreditation, and cross-border usability.

This technical and regulatory push is unfolding alongside political coordination. Several member states are already preparing to integrate the app into national digital identity wallets, with France, Denmark, Greece, Italy, Spain, Cyprus and Ireland cited as front-runners. French President Emmanuel Macron is convening EU leaders, including Spanish Prime Minister Pedro Sánchez and representatives of Italy, the Netherlands and Ireland, to align national approaches to restricting minors’ access to social media and to press for faster EU-level action.

Yes, but. The rollout is already facing scrutiny. Shortly after Ursula von der Leyen described the app as technically ready and privacy-preserving, a security researcher claimed its protections could be bypassed in minutes. The critique points to structural design issues rather than isolated bugs. Reported weaknesses include locally stored authentication data that can be reset or modified, allowing users to bypass PIN protections, disable biometric checks, and reset rate-limiting mechanisms by editing configuration files. This effectively enables the reuse of verified identity data under altered access controls.

The criticism has triggered broader concerns among developers about the app’s architecture, including why secure hardware features were not used, and whether elements like expiring age credentials are logically necessary.
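To make the reported class of weakness concrete, here is a minimal, purely illustrative Python sketch. It is not the app's code: the file layout, the `failed_attempts` field, the function names and the key are all hypothetical. It contrasts a lockout counter read straight from an editable local file with one sealed by an HMAC under a key the user cannot read, which stands in here for hardware-backed storage.

```python
import hashlib
import hmac
import json

MAX_ATTEMPTS = 3  # hypothetical lockout threshold


def is_locked_plain(config_text: str) -> bool:
    """Naive check: trusts whatever the local file says."""
    state = json.loads(config_text)
    return state["failed_attempts"] >= MAX_ATTEMPTS


def seal(state: dict, key: bytes) -> str:
    """Serialise the state together with an HMAC tag computed under `key`."""
    payload = json.dumps(state, sort_keys=True)
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return json.dumps({"payload": payload, "tag": tag})


def is_locked_sealed(sealed_text: str, key: bytes) -> bool:
    """Verify the tag first; a state that fails verification counts as locked."""
    wrapper = json.loads(sealed_text)
    expected = hmac.new(key, wrapper["payload"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, wrapper["tag"]):
        return True  # tampering detected: fail closed
    return json.loads(wrapper["payload"])["failed_attempts"] >= MAX_ATTEMPTS


# Plain file: editing the counter lifts the lockout.
locked = json.dumps({"failed_attempts": 3})
edited = json.dumps({"failed_attempts": 0})
print(is_locked_plain(locked), is_locked_plain(edited))

# Sealed file: swapping in an edited payload no longer matches the tag.
key = b"stand-in for a hardware-protected key"  # assumption: not readable by the user
sealed = seal({"failed_attempts": 3}, key)
forged = json.dumps({"payload": edited, "tag": json.loads(sealed)["tag"]})
print(is_locked_sealed(sealed, key), is_locked_sealed(forged, key))
```

A tag of this kind only detects edits. It would not stop someone from restoring an older, validly sealed file, which is one reason developers ask about hardware-backed counters and key storage.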

UK threatens jail for tech executives who fail to remove non-consensual intimate images

The UK government is planning measures that could expose senior technology executives to criminal charges, including prison sentences, if their companies fail to remove non-consensual intimate images when required by regulators.

The move builds on existing obligations that already require platforms to take down such material within strict timeframes or face significant penalties, including fines of up to 10% of global turnover or even service blocking.

Why does it matter? The latest step goes further: instead of relying solely on corporate sanctions, it introduces personal criminal accountability at the executive level. This type of liability is likely to accelerate compliance in ways that financial penalties alone have not, and may serve as an example to other jurisdictions.

Zooming out. The policy is part of a broader tightening of the UK’s online safety framework, driven by persistent concerns over revenge porn and the rapid proliferation of AI-generated intimate imagery.

EU blocks Meta’s WhatsApp third-party AI access changes with interim antitrust measures

The European Commission has sent Meta a supplementary charge sheet, formally a Supplementary Statement of Objections, outlining concerns over potential restrictions on third-party AI assistants’ access to WhatsApp.

Previously, Meta decided to reinstate access to WhatsApp for third-party AI assistants for a fee. However, the Commission has preliminarily found that these measures remain anticompetitive and has now issued interim measures to prevent these policy changes from causing serious harm to the market.

The interim measures would stay in effect until the Commission concludes its investigation and issues a final decision on Meta’s conduct.

LOOKING AHEAD

The 29th session of the Commission on Science and Technology for Development (CSTD) is scheduled to take place from 20 to 24 April 2026 at the Palais des Nations in Geneva, Switzerland.

The programme will address the priority theme of ‘Science, Technology and Innovation in the Age of Artificial Intelligence’ and will also review progress in implementing and following up on the outcomes of the World Summit on the Information Society at regional and international levels.

The session will include presentations on technical cooperation activities and the work of the multistakeholder Working Group on Data Governance, as relevant to development objectives. Participation is expected from representatives of national governments, international organisations, civil society and the private sector.

READING CORNER

An analysis of why the EU AI Act’s high-risk obligations are delayed by 16 months and how US federal intervention is dismantling state-level AI safety laws, creating a global governance vacuum.