YouTube deepfake detection raises new legal risks for organisations

Rapid advances in AI deepfake detection are changing how courts and companies view digital evidence, raising new questions about responsibility when fabricated audio or video appears.

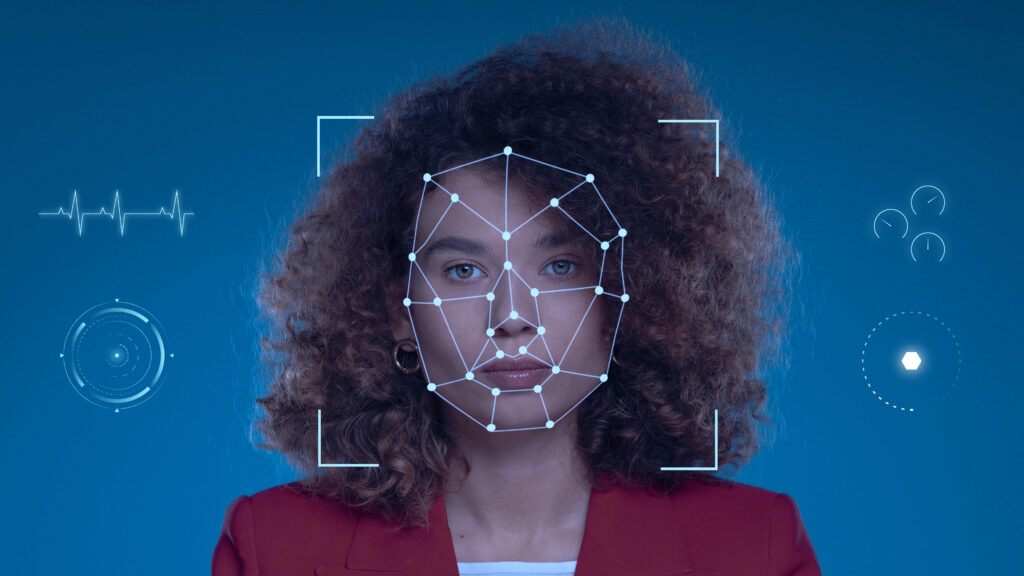

YouTube has expanded its AI likeness detection system to identify deepfakes targeting politicians, journalists and government officials. The tool works much like Content ID applied to faces: it compares uploaded videos against verified identity profiles and flags manipulated content.

Such large-scale deployment demonstrates that deepfake detection is technically viable, potentially shifting expectations for organisations that handle audio and video evidence. Legal experts suggest the emergence of proven detection tools could influence how courts evaluate negligence and ‘duty of care’ in cases involving synthetic media.

At the same time, research shows that many deepfake detection systems struggle outside controlled laboratory environments. Real-world attacks have already caused significant financial damage, including a case in which a deepfake video call impersonating executives led to a fraudulent transfer of $25 million.

Experts argue that effective protection requires two layers: AI-based screening to identify suspicious media and digital forensic analysis to verify evidence at the device level. Organisations lacking these safeguards risk serious legal and financial exposure as deepfake technology becomes more sophisticated.
