Dear all,

Policymakers were quite busy last week, proposing new laws, new strategies, and holding new consultations on laws and strategies. Actors reacting to some of these developments did not mince their words. But first, updates from the world of generative AI, as has become customary these days.

Let’s get started.

Stephanie and the Digital Watch team

// HIGHLIGHT //

The US White House’s approach to regulating AI:

Innovate, tackle risks, repeat

Generative AI tools, like ChatGPT, have reignited one of the most prominent dilemmas policymakers face: How to regulate this emerging technology without hindering innovation. The world’s three AI hotspots – the USA, the EU, and China – are each known for their distinct approaches to regulation: ranging from a hands-off attitude to strict regulation and enforcement frameworks.

Of the three, the USA has always favoured an innovation-first approach. It was this approach that helped a handful of American companies become the massive tech behemoths they are. But fast forward to 2023, a year that generative AI has hugely impacted: Today’s climate differs significantly from the one Big Tech companies enjoyed when they were just starting up.

US policymakers and top government officials have been sounding alarm bells over the risks of generative AI in recent weeks. AI experts called for a moratorium on further development of generative AI tools: ‘We do not have the guardrails in place, the laws that we need, the public education, or the expertise in government to manage the consequences of the rapid changes that are now taking place,’ the chair of research institute Centre for AI and Digital Policy told the US Congress recently.

Despite all these warning bells, two developments last week signalled that the White House would continue favouring a guarded innovation-first approach as long as the risks are tackled.

The first was a high-level meeting between US Vice President Kamala Harris and the CEOs of Alphabet/Google, Anthropic, Microsoft, and OpenAI. According to the invitation the CEOs received, the aim was to have ‘a frank discussion of the risks we each see in current and near-term AI development, actions to mitigate those risks, and other ways we can work together’.

And the meeting, at which President Joe Biden also made a brief appearance, was exactly that. Harris told the CEOs that they needed to make sure their products were safe and secure before deploying them to the public, that they needed to mitigate the risks (to privacy, democratic values, and jobs), and that they needed to set an example for others, consistent with the US’ voluntary frameworks on AI.

In essence, the White House signalled that, for now, it has decided to trust that the companies will act responsibly, leaving it to Congress to figure out how tech companies can be held responsible. During a press call the day before, asked whether the administration ‘trust(ed) these companies to do that proactively given the history that we’ve seen in Silicon Valley with other technologies like social media’, senior administration officials replied:

‘Clearly there will be things that we are doing and will continue to do in government, but we do think that these companies have an important responsibility. And many of them have spoken to their responsibilities. And, you know, part of what we want to do is make sure we have a conversation about how they’re going to fulfil those pledges.’

The second was the announcement of new measures ‘to promote responsible AI innovation’: funding to launch new research institutes; upcoming guidance on the use of AI by the federal government; and, interestingly, an endorsement of a red-teaming event at DEFCON 31 that will bring together AI experts, researchers, and students to dissect popular generative AI tools for vulnerabilities.

Why would the White House support a red-teaming event? First, because it’s a practical way of reducing the number of vulnerabilities and, therefore, limiting risks. Hackers will be able to experiment on jailbroken versions of the software and confidentially report vulnerabilities, and the companies will be given time to fix their software.

Second, it opens up the software to the scrutiny of the (albeit limited) public. Unless people know what’s really under the bonnet, they can’t report issues or help fix them.

Third, it’s low-hanging fruit for any approach that favours giving companies a free hand to innovate for now and taking other steps that do not involve heavy-handed regulation.

The question is not whether these steps will be enough. They’re not, as new AI tools will continue to be developed. Rather, it’s whether this guarded trust is misplaced and whether policymakers have learned from the past. As Federal Trade Commission chair Lina Khan wrote, ‘The trajectory of the Web 2.0 era was not inevitable — it was instead shaped by a broad range of policy choices. And we now face another moment of choice.’

// AI //

UK’s competition authority launches review of AI models

The UK’s Competition and Markets Authority (CMA) has kickstarted an initial review of how AI models impact competition and consumers. The focus is strictly on ensuring that AI models neither harm consumer welfare nor restrict competition in the market.

The CMA has called for public input (till 3 June). Depending on the findings, the CMA may consider regulatory interventions to address any anti-competitive issues arising from the use of AI models.

Why is this relevant? Because it contrasts with the steps the CMA’s counterpart across the pond – the Federal Trade Commission – pledged to take.

// CABLES //

NATO warns of potential Russian threat to undersea pipelines and cables

NATO’s intelligence chief David Cattler has warned that there is a ‘significant risk’ that Russia could attack critical infrastructure in Europe or North America, such as internet cables and gas pipelines, as part of its conflict with the West over Ukraine.

The ability to undermine the security of Western banking, energy, and internet networks is becoming a tremendous strategic advantage for NATO’s opponents, Cattler said.

Why is this relevant? Apart from the warning itself, the comments came a day before NATO’s Secretary General Jens Stoltenberg met with industry leaders to discuss the security of critical undersea infrastructure. Undoubtedly, the security of the Nord Stream pipeline’s surrounding region was on the agenda.

// SENDER-PAYS //

Stakeholders oppose fair share fee

A coalition of stakeholders has come together to publicly caution against the potential implementation of a fair share fee, which content providers would be obliged to pay to telecom companies.

The group, which includes NGOs, cloud associations, and broadband service providers, was reacting to a consultation launched by the European Commission in February. It’s not the consultation itself they’re worried about, but any misleading conclusions it might lead to.

Why is this relevant? First, the signatories to the statement think there’s ‘no evidence that a real problem’ exists. Second, they say the fee would be a potential violation of the net neutrality principle – a principle that the EU has staunchly protected.

// MEDIA //

Google and Meta voice opposition to Canada’s online news bill

The battle over Canada’s proposed online news bill continues. In last week’s hearing by the Senate’s Standing Committee on Transport and Communications, both Google and Meta said that they would have to withdraw from Canada should the proposed bill pass as it stands now.

One of the main issues is that the bill obliges companies to pay news publishers for linking to their sites, ‘making us lose money with every click’, according to Google’s vice-president for news, Richard Gingras.

Why is this relevant? Because Google and Meta have repeated their threat that they’re ready to leave if the bill isn’t revised.

// PRIVACY //

EU’s top court rules on two GDPR cases

In the first – Case C-487/21 – the Court of Justice of the EU clarified that the right of access under the GDPR entitles individuals to obtain a faithful reproduction of their personal data. That can mean entire documents, if there’s personal data on each page.

In the second – Case C-300/21 – the court confirmed that the right to compensation under the GDPR is subject to three conditions: infringement of the GDPR, material or non-material damage resulting from the infringement, and a causal link between the damage and the infringement. But violation of the GDPR alone does not automatically entitle the claimant to compensation. That’s up to national laws to determine.

Was this newsletter forwarded to you, and you’d like to see more?

// IPR //

EU Commission releases non-binding recommendation to combat online piracy of live events

The European Commission has opted for a non-binding strategy to combat the piracy of live events, generating dissatisfaction among both lawmakers and rightsholders.

The measure outlines several recommendations for national authorities, rightsholders, and intermediary service providers to tackle the issue of live event piracy more effectively, but its non-binding nature falls short of what is required to address the issue.

Why is this relevant? Because the European Commission went ahead with its plans despite not one but two complaints from a group of parliamentarians calling for a legislative instrument to counter online piracy.

// AUTONOMOUS CARS //

China publishes draft standards for smart vehicles

China’s Ministry of Industry has published a series of draft technical standards for autonomous vehicles (Chinese) – developed within a National Automobile Standardisation Technical Committee – that will address cybersecurity and data protection issues. The public can comment till 5 July.

One of the standards requires that data generated by autonomous vehicles be stored locally. The government wants to ensure that any sensitive data stays within China’s borders.

Another standard will require autonomous vehicles to be equipped with data storage equipment to allow data to be retrieved and analysed in the case of an accident. Reminds us of flight data recorder black boxes.

9–12 May: The Women in Tech Global Conference, in hybrid format, will bring women active in the technology sector together to discuss their perspectives on tech leadership, gender parity, digital economy, and more.

10 May: Last day for feedback on two open consultations: The European Commission’s single charger draft rules and China’s proposed regulation for generative AI tools (Chinese).

10–12 May: UNCTAD’s Intergovernmental Group of Experts on E-commerce and the Digital Economy meets in Geneva for its sixth session.

11 May: In the European Parliament, the joint committee (IMCO/LIBE) vote on the report on the Artificial Intelligence Act takes place today.

For more events, bookmark the observatory’s calendar of global policy events.

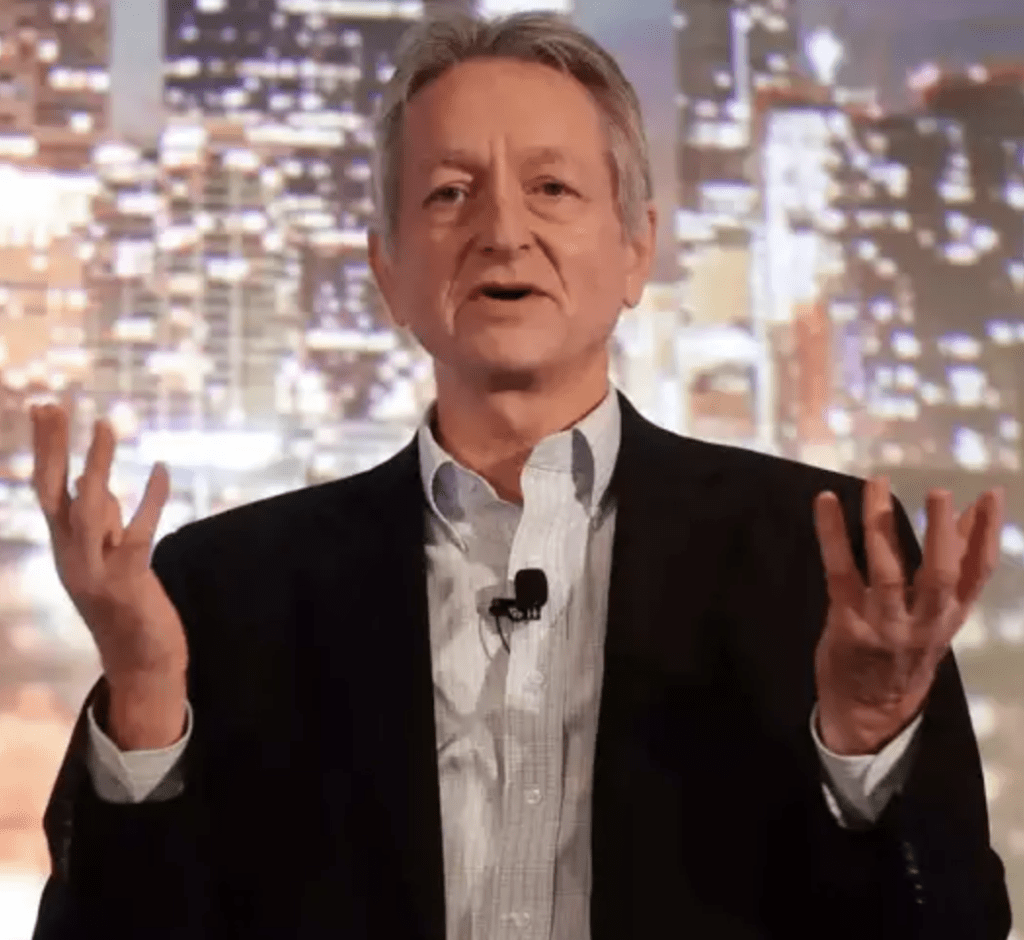

AI an urgent threat, says AI pioneer

AI pioneer Geoffrey Hinton, who turned 75 in December and who recently resigned from Google, tells news agency Reuters that AI could pose a ‘more urgent’ threat to humanity than climate change. In another interview with the Guardian, he says there’s no simple solution.

Starlink arrives in Africa, but South Africa left behind

Starlink, the satellite internet constellation developed by Elon Musk’s SpaceX, has started operating in Nigeria, Mozambique, Rwanda, and Mauritius over the past few months, with 19 more African countries scheduled for launch this year and the next. But South Africa is notably missing from this list. Could this be due to South Africa’s foreign ownership rule, which grants licences only to companies with at least 30% South African ownership? A Ventures Africa contributor investigates.
